The Econometricians

Great Minds in Finance

Series Editor

- Professor Colin Read

- Professor of Economics and Finance

- former Dean of the School of Business and Economics

- The State University of New York at Plattsburgh (SUNY), USA

Aims of the Series

This series explores the lives and times, theories and applications of those who have contributed most significantly to the formal study of finance. It aims to bring to life the theories that are the foundation of modern finance, by examining them within the context of the historical backdrop and the life stories and characters of the ‘great minds’ behind them. Readers may be those interested in the fundamental underpinnings of our stock and bond markets; college students who want to delve into the significance behind the theories; or experts who constantly look for ways to more clearly understand what they do, so they can better relate to their clients and communities.

More information about this series at http://www.springer.com/mycopy/series/15025

The Econometricians

Gauss, Galton, Pearson, Fisher, Hotelling, Cowles, Frisch and Haavelmo

Colin Read

- Professor of Economics and Finance

- former Dean of the School of Business and Economics

- The State University of New York at Plattsburgh (SUNY), USA

Great Minds in Finance ISBN 978-1-137-34136-5 ISBN 978-1-137-34137-2 (eBook) DOI 10.1057/978-1-137-34137-2

Library of Congress Control Number: 2016948000

© The Editor(s) (if applicable) and The Author(s) 2016

The author(s) has/have asserted their right(s) to be identified as the author(s) of this work in accordance with the Copyright, Designs and Patents Act 1988.

This work is subject to copyright. All rights are solely and exclusively licensed by the Publisher, whether the whole or part of the material is concerned, specifically the rights of translation, reprinting, reuse of illustrations, recitation, broadcasting, reproduction on microfilms or in any other physical way, and transmission or information storage and retrieval, electronic adaptation, computer software, or by similar or dissimilar methodology now known or hereafter developed.

The use of general descriptive names, registered names, trademarks, service marks, etc. in this publication does not imply, even in the absence of a specific statement, that such names are exempt from the relevant protective laws and regulations and therefore free for general use.

The publisher, the authors and the editors are safe to assume that the advice and information in this book are believed to be true and accurate at the date of publication. Neither the publisher nor the authors or the editors give a warranty, express or implied, with respect to the material contained herein or for any errors or omissions that may have been made.

Printed on acid-free paper

This Palgrave Macmillan imprint is published by Springer Nature. The registered company is Macmillan Publishers Ltd. London

Preamble

This book is the seventh in a series of discussions about the great minds in the history and theory of finance.

The series describes the contributions of those remarkable individuals who expanded our understanding of the underpinnings and theories of modern finance. While earlier volumes discussed those who described the importance of growth and interest rates on our economic decisions, described the methods by which we choose assets for our portfolios, discussed whether markets are efficient, and described the roots and applications of public finance, this volume treats the statistical and econometric tools that provide the foundation of all finance theory.

The statisticians and econometricians whom we describe here collectively created the framework and the techniques with which we have viewed finance ever since. Their tools were, at times, developed to solve problems that were intuitively unrelated to finance, but which were eventually tailored to the unique needs of the discipline. A series of great minds developed their concepts well before theorists and practitioners had access to modern computing, so their theories were necessarily intuitive and geometric, and easy to apply with a pencil and paper and some incredible imagination. While the technological limitations of their day had initially limited and somewhat rigidly defined how we now view our financial variables and analyses, the limitations also offer a simplicity and create a vocabulary that is most accessible.

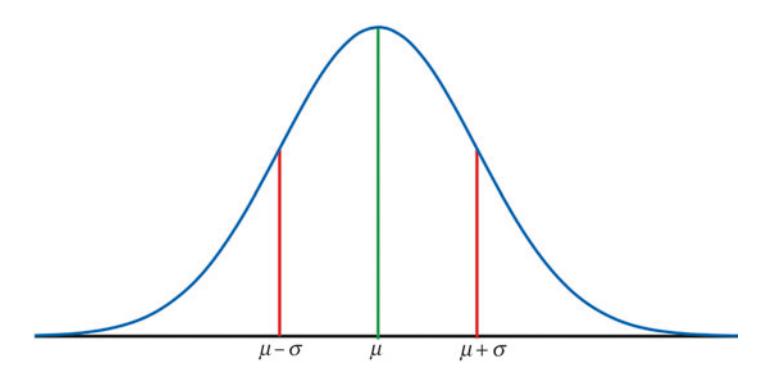

The conceptual framework began with the work of Carl Friedrich Gauss, in his efforts to describe not only the movement of the planets but also the valuation of a widow’s pension annuity. His commonsense and intuitive approach is now one of the earliest taught statistical concepts, and his Gaussian “normal” distribution now underpins much of financial theory. Indeed, his method of least squares, the linear regression model, the concept of maximum likelihood, and the description of data based on its mean and variance have been broadly applied to finance problems for the past century, and are the foundation for the courses in statistics we take in our finance programs.

Gauss’ contribution was further formalized by the work of Sir Francis Galton in his seemingly unrelated development of the eugenics movement, by Galton’s protégé, Karl Pearson, the first modern statistician, and by Sir Ronald Fisher, and his further characterizations of Gauss’ distribution. Then, Harold Hotelling took this burgeoning study of statistics to better understand and discern trends in financial data. The totality of their contributions now represents the basis for the introduction to statistical methods studied by every finance student since.

In particular, the characterization of the properties of various statistics which summarize and represent financial data was an essential step in proving our confidence in models meant to represent or predict data. Here, Gauss got us started, and so much more. The next step was in the construction of mathematical models which can predict our data. The enigmatic Francis Galton took the statistical methods formulated by Gauss and produced the intuition for the now familiar and widely employed linear regression model.

Sir Galton fomented a revolution. The scientific method had advanced humanity’s knowledge manifold in the previous couple of centuries. Galton brought a new level of sophistication to experimental technique and to social sciences. He also produced the foundation for the linear regression model that others would use as a basis for a revolution in analysis and policy making. But his contribution, while intuitive and important, was incomplete and lacked sufficient formality. His protégé, Karl Pearson, added rigor and proposed a multitude of statistical measures still employed today. Then, the brilliant Sir Ronald Fisher parlayed the poor eyesight he suffered as a young boy into a geometric interpretation of the statistics Pearson proposed and produced an axiomatic and formal body of results that established modern statistics as a legitimate body of mathematics.

All these individuals were either astrophysicists, geneticists or applied mathematicians, though. None of them understood the particular problems that finance theory invoked. Nor did any of these great minds spend time analyzing the data sets necessary to turn the art of finance into the science that it is today. The next necessary leap was to take these tools of mathematical statistics and apply them to problems in finance and economics. The Great Mind Harold Hotelling was instrumental in expanding the work of Fisher and in bringing it to new and receptive scholars in the USA. However, the time series data so prevalent in finance presented peculiar problems. The rapid development of the specialized tools of econometrics and financial statistics required a fresh approach.

An heir to a newspaper empire was one of the first to realize that these techniques could be recast on a systematic basis to treat time series data that was particularly relevant to finance. While not necessarily a trained scientist himself, Alfred Cowles III was nonetheless an entrepreneur who viewed his role as one who could create an environment for others more scientifically skilled to advance the new disciplines of finance and econometrics. He did so by forming an institution that would attract some of the greatest minds in the history of finance. These include the Great Minds Milton Friedman, Kenneth Arrow, Jacob Marschak, Harry Markowitz and others.

Two such luminaries who accepted Cowles’ intuition and largesse were Ragnar Frisch and Trygve Haavelmo, a pair of great minds the Nobel Memorial Prize Committee eventually recognized as the founders of modern econometrics and financial statistics. Their extension of statistics to the creation of the more specialized field of econometrics significantly expanded the sophistication and robustness of empirical financial models ever since.

Cowles, Frisch and Haavelmo also established a research agenda that would result in the awarding of Nobel Memorial Prizes to almost a dozen subsequent contributors to the foundations of modern finance, most of whom are chronicled elsewhere in this series. Collectively, these Great Minds established the foundation in finance that all financial theorists have since followed.

Through their contributions, our theories of finance could be compared to and validated against real-world data. They have allowed subsequent scholars to improve, or perhaps reject their models, have permitted policy makers to offer better public finance tools, and have allowed practitioners to discover and tease value from the vast reams of data generated from financial markets. In doing so, these Great Minds helped make the art of investing a modern science.

Preface to the Great Minds in Finance Series

When one mentions the word “finance” to an interested and engaged listener, people respond in a variety of ways. The word may elicit a yawn from those who think of finance as the mundane process of ensuring the family savings will allow them to maintain their familiar level of consumption in their retirement years. Students of finance, at college or in life, think of the term as a mechanism for a battle of wits, with buyers and sellers of securities pitting themselves against each other to see who can profit best from the same information. A banker might think of the conservative practices one employs with shareholder and depositor money by lending it back out to trustworthy businesses in the region, hopefully to earn a profit. And tax accountants and lawyers may think of the myriad of ways a corporation can organize to maximize owners’ profits and minimize risk. Listeners often prefer to relegate the intricacies of finance to an expert, as they would their legal or medical affairs.

Most people use the terms economics and finance synonymously. This misconception is understandable. The formal discipline of economics defines the laws or principles that govern the choices we make in meeting our needs. The term economics is derived from the Greek word “oikos,” meaning environment but also referring to one’s house or life. It is combined with “nomics” from the Greek word “nomos,” or “law of,” to label the social science that studies our decisions in furthering our own interests.

These “economic” decisions are primarily thought of as financial because they often involve money. Households attempt to manage their income and wealth to ensure they are able to consume, in the present and the future, in ways that allow them to thrive. Such careful financial decisions that will govern our consumption now and in retirement are so critical for our well-being that it is natural for most people to consider finance as economics even though, more correctly, finance is a branch of economics that has great relevance in the day-to-day and livelihood-defining decisions of us all.

This series describes the ancestry, life, times, theories and legacies of the great minds who contributed to the modern formal study of finance. Their collective contributions address the various interpretations of finance not through dry exposition and even drier equations, but through intuition and context, their lives and times, and a few equations and diagrams that each developed to revolutionize financial thought.

Readers may be those interested in the fundamental underpinnings of our stock and bond markets, college students who want to delve into the significance behind the theories, and the experts who constantly look for ways to more clearly understand what they do so they can better relate to their clients and communities. The series provides important insights of great minds in finance within a context of the events that inspired their moments of brilliance. In doing so, I hope to bring life to the theories that are the foundation of modern finance.

This series covers the gamut of the study of finance, typically through the lives and contributions of great minds upon whose shoulders the discipline stands. From the significance of financial decisions over time and through the cycle of one’s life, to the ways in which investors balance reward and risk, from how the price of a security is determined to whether these prices properly reflect all available information, we will look at the fundamental questions and answers in finance. We delve into theories that govern personal decision-making, those that dictate the decisions of corporations and other similar entities, and the public finance of government.

Some of the theories we describe may appear abstract and narrow. A successful theory must be sufficiently narrow to make strong conclusions. A theory that is overly general will draw the weakest of conclusions that offer little utility. On the contrary, the best theories draw the strongest possible conclusions from the weakest set of assumptions. And, a successful “unifying” theory in finance can replace a large number of lesser theories and concepts, just as physicists hold out for a unifying theory that can draw together their isolated understandings from a variety of specialties.

By focusing on the great minds in finance, we draw together the concepts that have stood the test of time and have proven themselves to reveal something about the way humans make financial decisions. These principles that have flowed from individuals who are typically awarded the Nobel Memorial Prize in Economics for their insights, or perhaps shall be awarded someday, allow us to see the financial forest for the trees.

While one might assume that every financial expert would be well versed in these fundamentals, such is not the case. An investor can succeed through sheer intuition without having studied the insights of theorists over a century of financial discovery. Mathematicians and physicists are increasingly employed to develop techniques that recognize patterns in numbers with little regard or understanding of the underlying forces that explain these patterns. And, computer experts can design algorithms that allow great banks of servers to constantly poke and prod the market to induce, and then profit from, movements in prices of stocks or bonds. By capitalizing on such shifts in prices milliseconds before others take notice, these algorithms can garner pennies, or fractions of pennies, at a time, thousands of times an hour, to yield huge profits.

These practitioners do not depend on, or even care about, the fundamental principles that drive markets in the long run. To them, the long run expires within a week or a day. Such “technical analysis” is decidedly transient and short term. In fact, a steady and predictable investment opportunity based on well-known and well-understood information is simply insufficiently volatile to yield quick profits.

Unfortunately, such technical analysis that depends only on price dynamics in the short term has emerged as the lucrative Holy Grail of modern finance. It allows the most skilled practitioners to make money when markets are rising or falling. However, it reveals nothing about how financial decisions should be made in the long run to satisfy an economy’s need for capital, investment, reward and reduced risk. Nor does it make our economy more efficient. Rather, technical analysts devote a great deal of talent, energy and effort as they clamor for others’ pieces of a fixed economic pie.

The giants who have produced the theories and concepts that drive financial fundamentals share one important characteristic. They have developed insights that explain how markets can be used or tailored to create a more efficient economy. They demonstrate how individuals can trade risk and reward in the same way that a supplier might trade with a consumer of a good. Through this process, both sides win. Greater efficiency is a tide that lifts all boats. These pioneers of finance explain how tools can be used to create greater market efficiency and even suggest the creation of new tools to create efficiency enhancements that may have proven elusive otherwise.

Global financial markets are experiencing a technological revolution. From a strictly aesthetic perspective, one cannot entirely condemn the tug-of-war struggle for profits the technicians seek, even if they do little to enhance, and may even detract from, efficiency. The mathematics and physics of price movements and the sophistication of computer algorithms are fascinating in their own rights. Indeed, my university studies began with a Bachelor of Science degree in physics, followed by a PhD in economics. However, as I began to teach economics and finance, I realized that the analytic tools of physics that so pervade theories of modern finance have strayed too far from explaining the essence of human financial decision-making.

As I taught the economics of intertemporal choice, the role of money and financial instruments, and the structure of the banking and financial intermediaries, I also recognized that my students had become increasingly fascinated with investment banking and Wall Street. Meanwhile, the developed world experienced the most significant breakdown of financial markets in almost eight decades. I realized that this once-in-a-lifetime global financial meltdown arose because we had moved from an economy that produced stuff to one in which a third of all profits by 2006 in the USA were made in the financial industry, with little to show but pieces of paper representing wealth that had value only if some were ever ready to buy them.

Many were surprised by the Global Financial Meltdown that soon followed. It became clear that much of our financial understanding lacked perspective. I set out to discover that perspective and research the theories that underpin modern finance, with the goal of forming a better understanding of how great financial concepts were created. I decided to shift my research from academic research in esoteric fields of economics and finance toward a better understanding of markets on behalf of the educated public. I began to write a regular business column and a book that documented the unraveling of the Great Recession. The book, entitled Global Financial Meltdown: How We Can Avoid the Next Economic Crisis, described the events that gave rise to the most significant economic crisis in our lifetime. I followed that book with “The Fear Factor” that explained the important role of fear as a sometimes constructive, and at other times destructive, influence in our financial decision-making. I then wrote a book on why many economies at first thrive, and then struggle to survive in The Rise and Fall of an Economic Empire. Throughout, I try to explain the intuition and the understanding that would, at least, help readers make informed decisions in increasingly volatile global economies and financial markets.

In this series of great minds in finance, I offer a historical perspective on how the discipline of finance developed. I also hope to impart to you how individuals born without great fanfare can be regarded as geniuses, often in their lifetime but sometimes not until years later. The lives of each of the individuals treated in this series become extraordinary, not because they made an unfathomable leap in our understanding, but rather because they looked at something in a different way and caused us all to forever look at the problem in this new way. That is the test of genius.

Contents

| Part I | Mathematicians and Astronomers |
|---|---|
| 1 | The Early Life of Carl Friedrich Gauss |
| 2 | The Times of Carl Friedrich Gauss |
| 3 | Carl Gauss’ Great Idea |
| 4 | The Later Years and Legacy of Carl Friedrich Gauss |
| Part II | From Least Squares to Eugenics |
| 5 | The Early Life of Francis Galton |
| 6 | The Times of Francis Galton |
| 7 | The Later Life and Legacy of Sir Francis Galton |
| 8 | The Early Life of Karl Pearson |
| 9 | Karl Pearson’s Great Idea |
| 10 | The Later Life and Legacy of Karl Pearson |
| Part III | The Formation of Modern Statistics |
| 11 | The Early Life of Ronald Aylmer Fisher |
| 12 | The Times of Ronald Aylmer Fisher |
| 13 | Ronald Fisher’s Great Idea |
| 14 | Later Life and Legacy of Ronald Fisher |
| 15 | The Early Life of Harold Hotelling |
| 16 | The Times of Harold Hotelling |
| 17 | Harold Hotelling’s Great Idea |
| 18 | The Later Life and Legacy of Harold Hotelling |
| Part IV | The Birth of a Commission and Econometrics |
| 19 | The Early Life of Alfred Cowles III |
| 20 | The Times of Alfred Cowles III |
| 21 | The Great Idea of Alfred Cowles III |
| 22 | Legacy and Later Life of Alfred Cowles III |
| 23 | The Early Life of Ragnar Frisch |
| 24 | The Times of Ragnar Frisch |
| 25 | Ragnar Frisch’s Great Idea |
| 26 | Legacy and Later Life of Ragnar Frisch |
| 27 | The Early Years of Trygve Haavelmo |
| 28 | The Times of Trygve Haavelmo |
| 29 | Haavelmo’s Great Idea |
| 30 | Legacy and Later Life of Trygve Haavelmo |
| Part V | What We Have Learned |
| 31 | Conclusions |
| Glossary | |
| Index | |

List of Figures

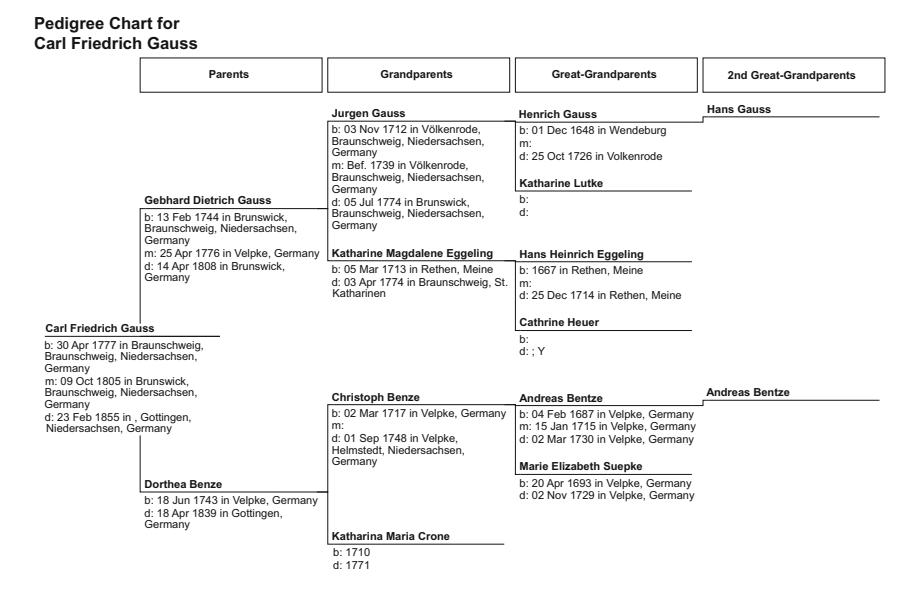

| Fig. 1.1 | Th e ancestry of Carl Friedrich Gauss | 5 |

|---|---|---|

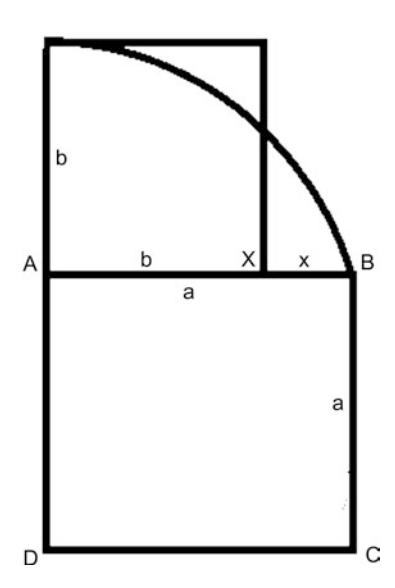

| Fig. 2.1 | Th e calculation of geometric means | 14 |

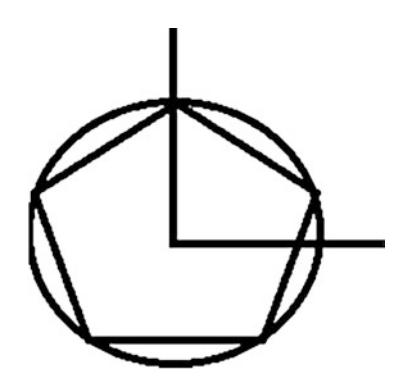

| Fig. 2.2 | Th e pentagon in a unit circle | 15 |

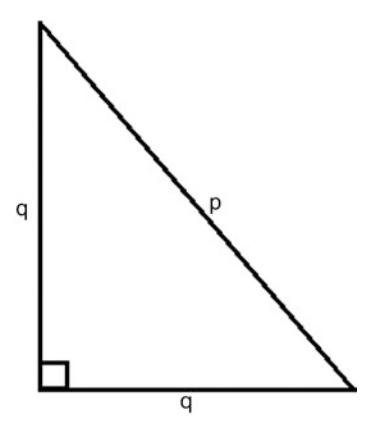

| Fig. 2.3 | An isosceles triangle of hypotenuse p and adjacent | |

| and opposite sides q | 18 | |

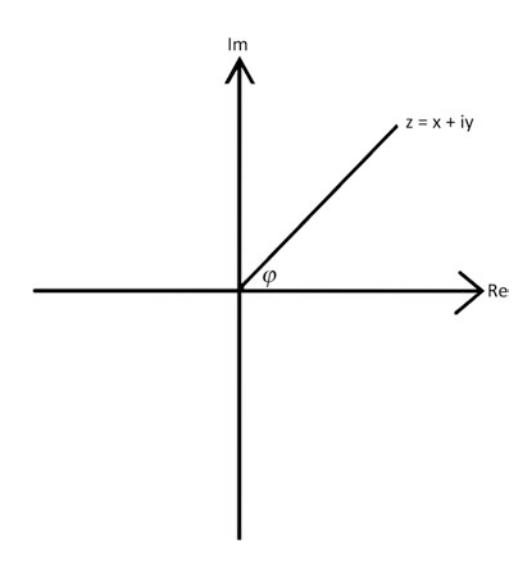

| Fig. 2.4 | Th e complex plane | 24 |

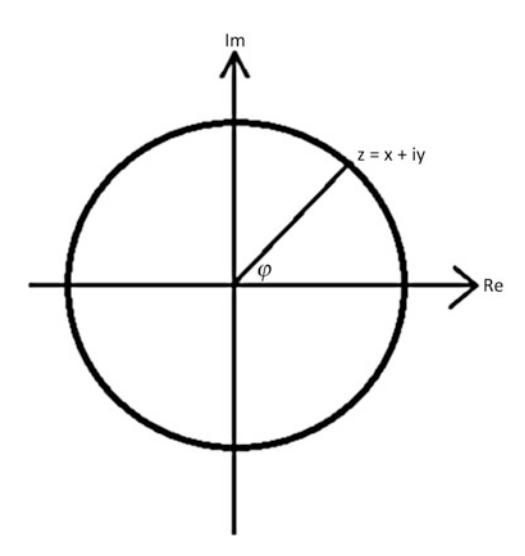

| Fig. 2.5 | Th e unit circle on the complex plane | 26 |

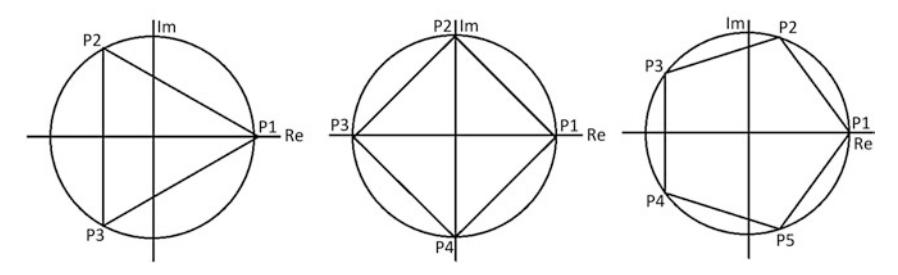

| Fig. 2.6 | Regular polygons in the unit circle on the complex plane | 28 |

| Fig. 3.1 | Th e Gaussian distribution | 53 |

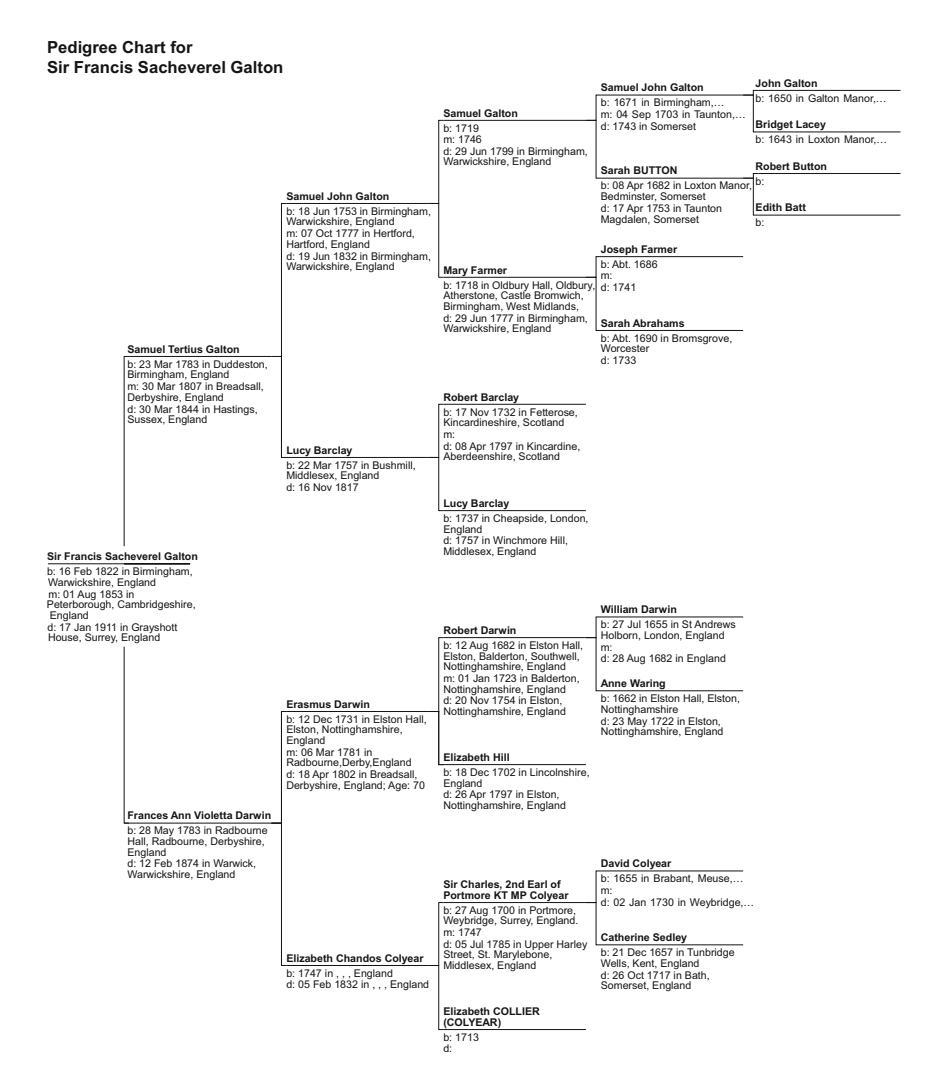

| Fig. 5.1 | Th e ancestry of Francis Galton | 69 |

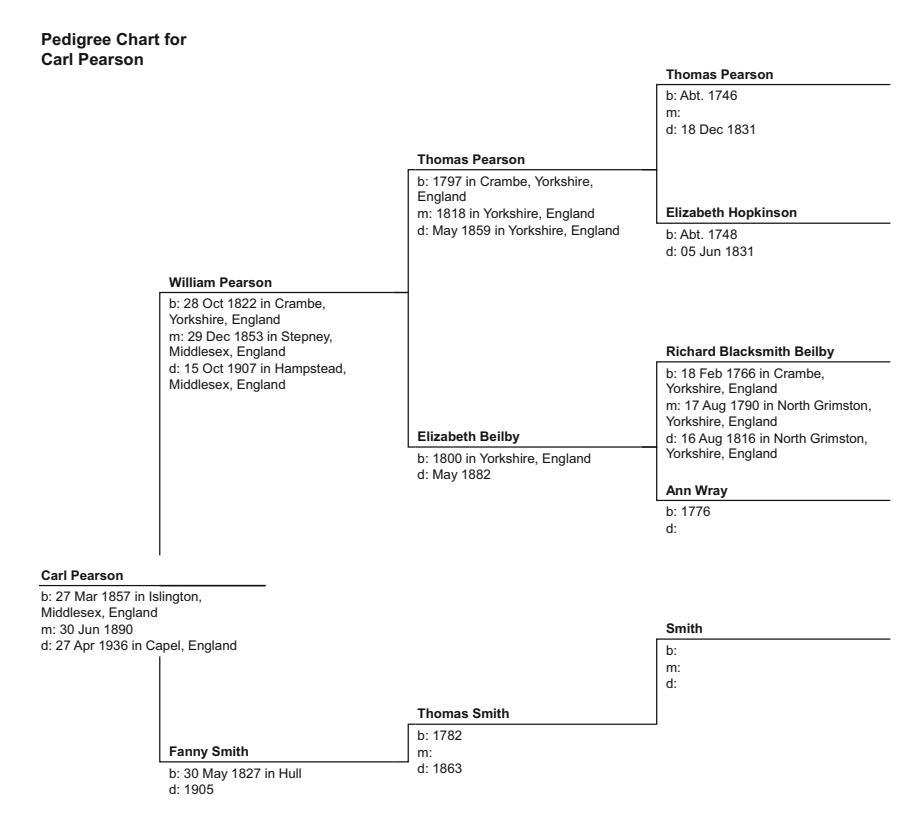

| Fig. 8.1 | Th e ancestry of Carl Pearson | 85 |

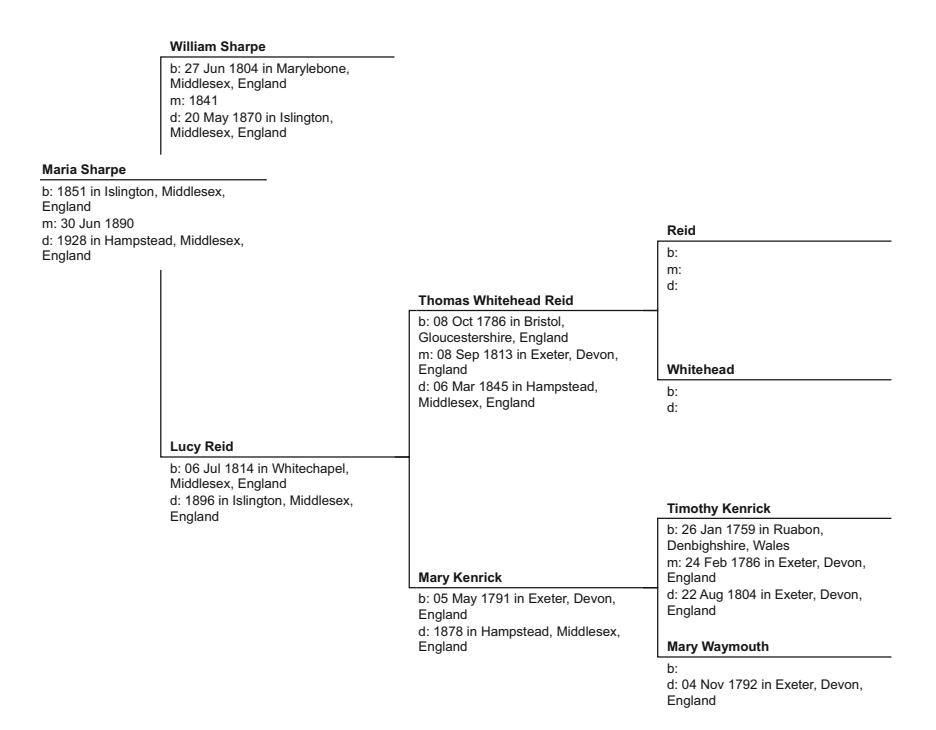

| Fig. 10.1 | Th e ancestry of Maria Sharpe | 103 |

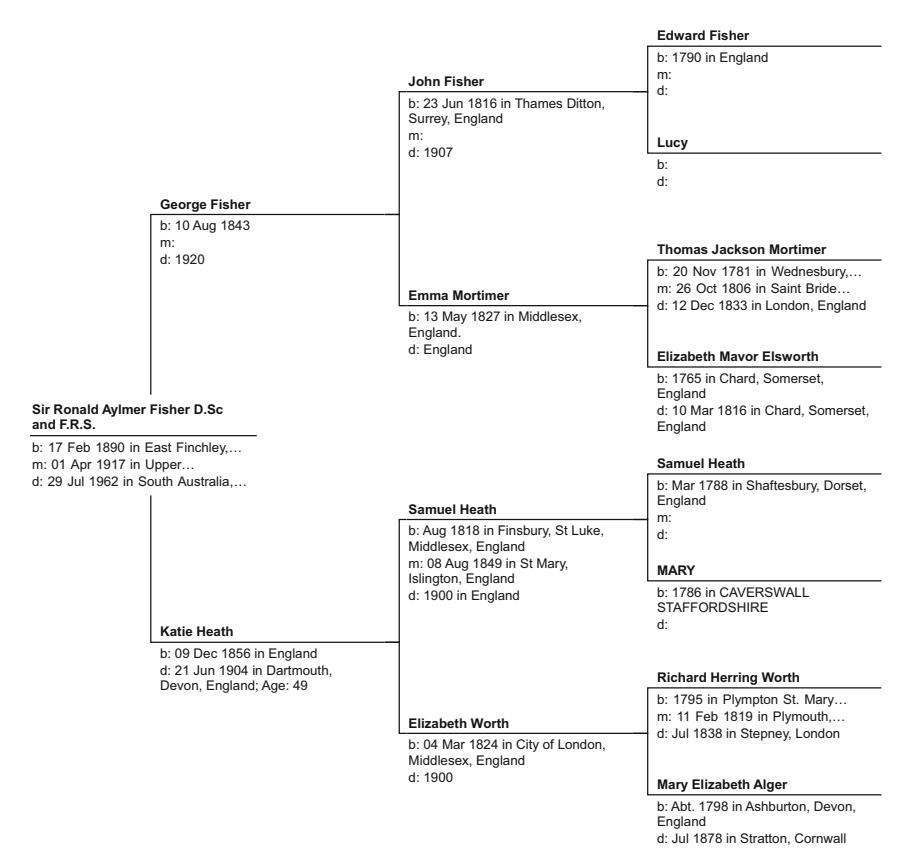

| Fig. 11.1 | Th e ancestry of Ronald Fisher | 113 |

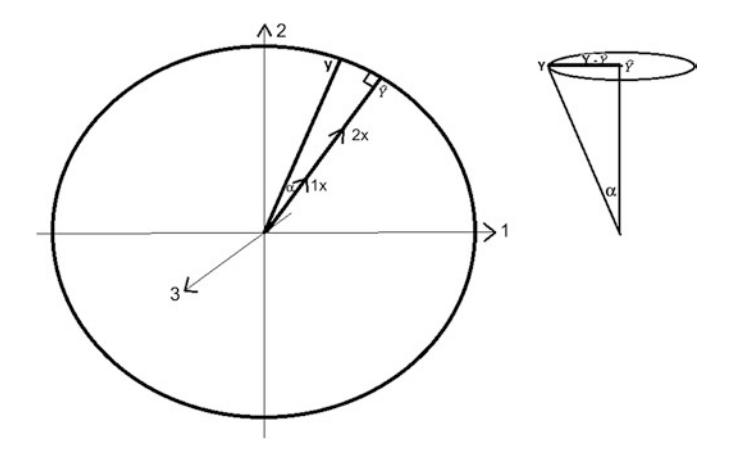

| Fig. 13.1 | Th e predicted cone of a hypothesis test | 131 |

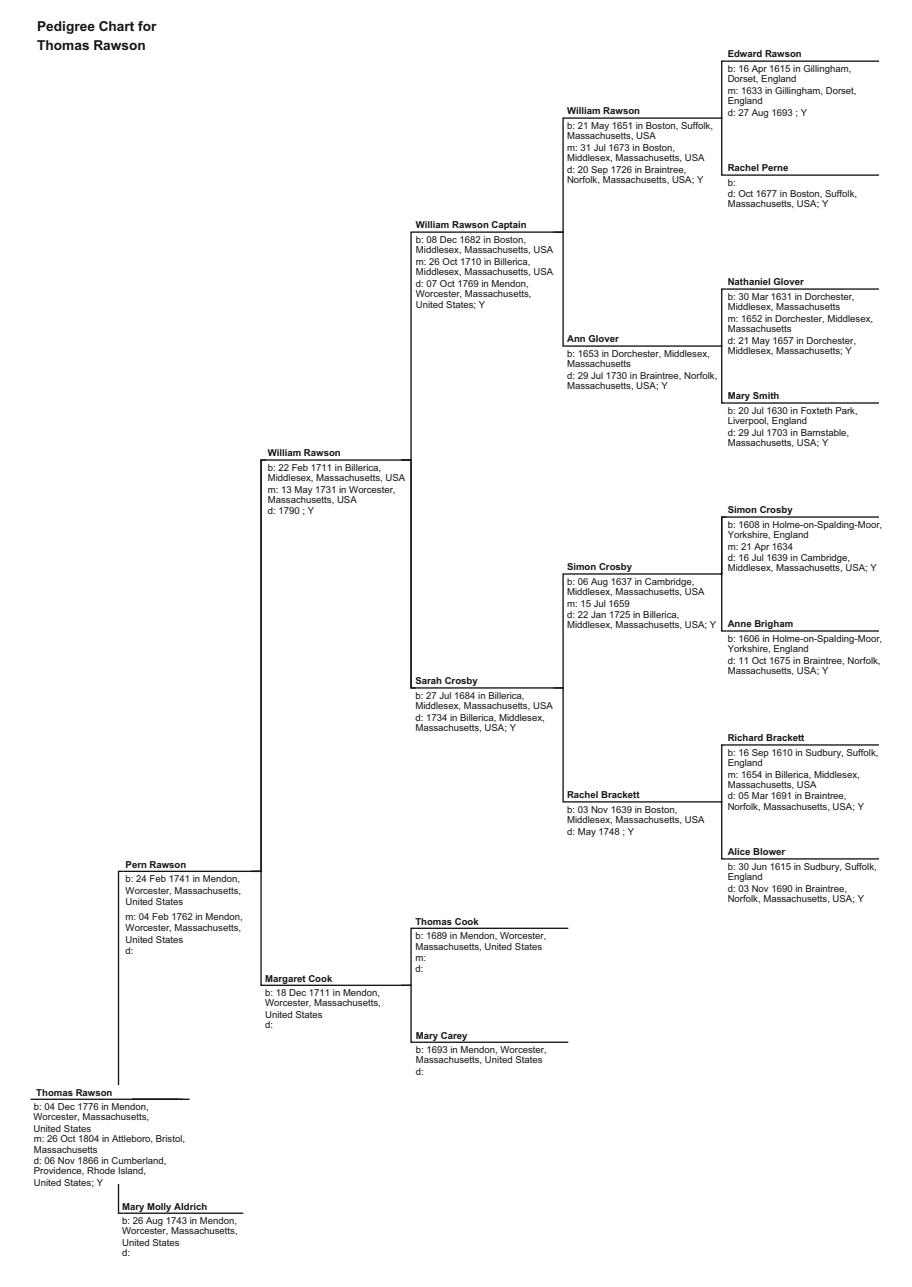

| Fig. 15.1 | Th e ancestry of the Rawson family | 151 |

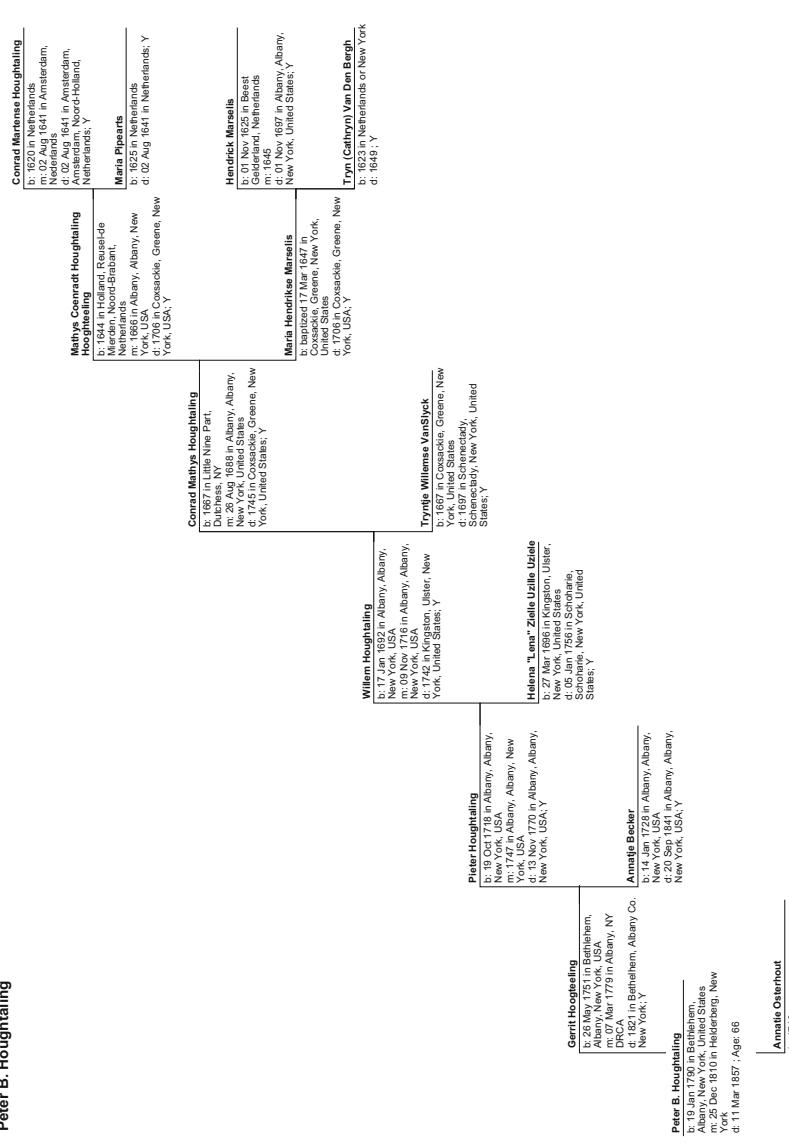

| Fig. 15.2 | Th e distant ancestry of Harold Hotelling | 153 |

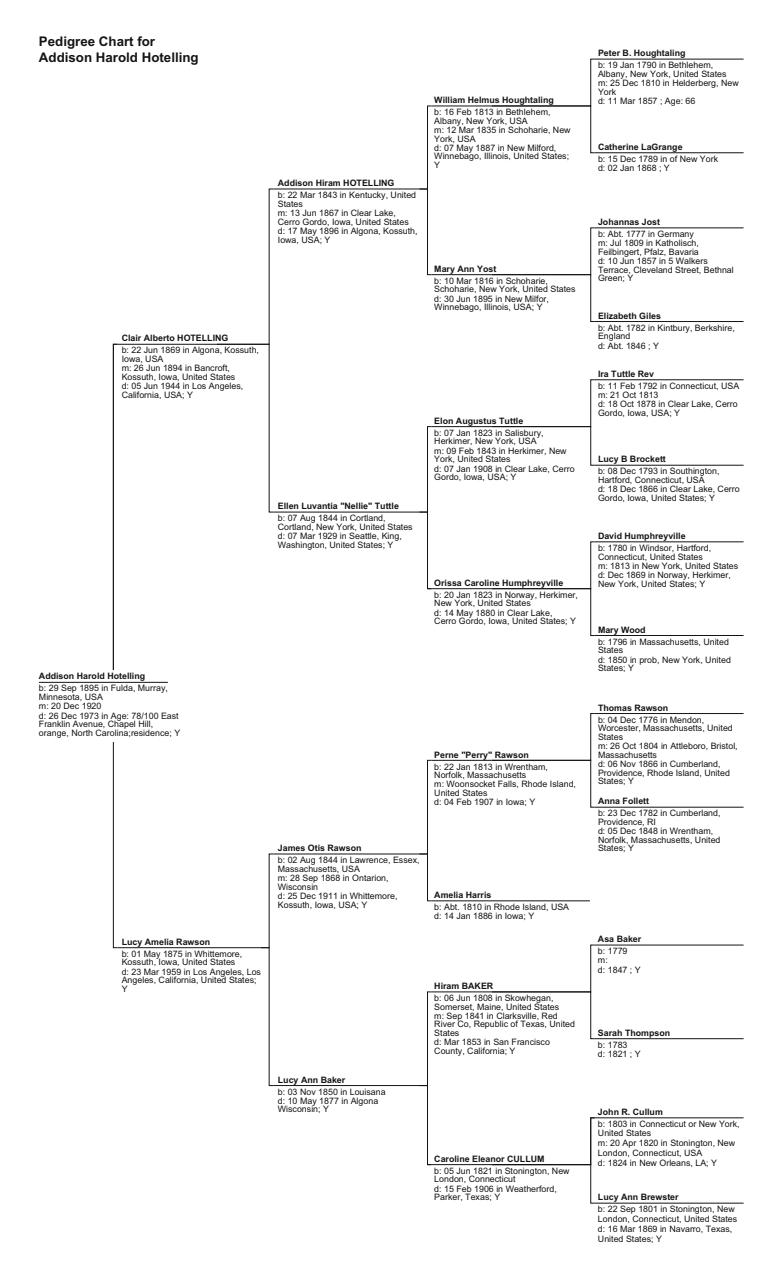

| Fig. 15.3 | Th e immediate ancestry of Harold Hotelling | 156 |

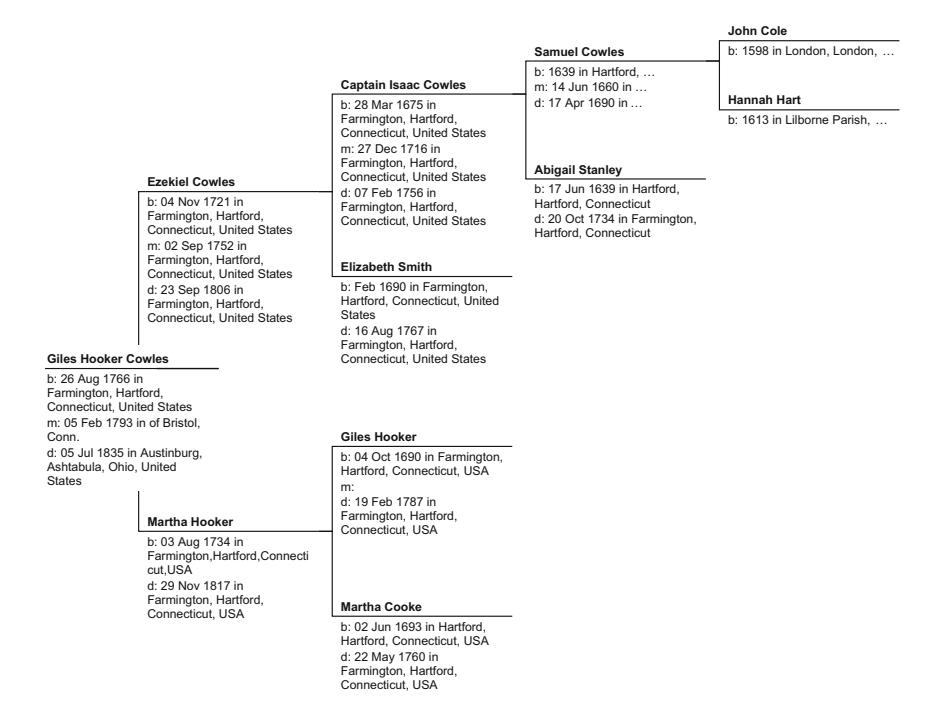

| Fig. 19.1 | Distant ancestry of Alfred Cowles III | 178 |

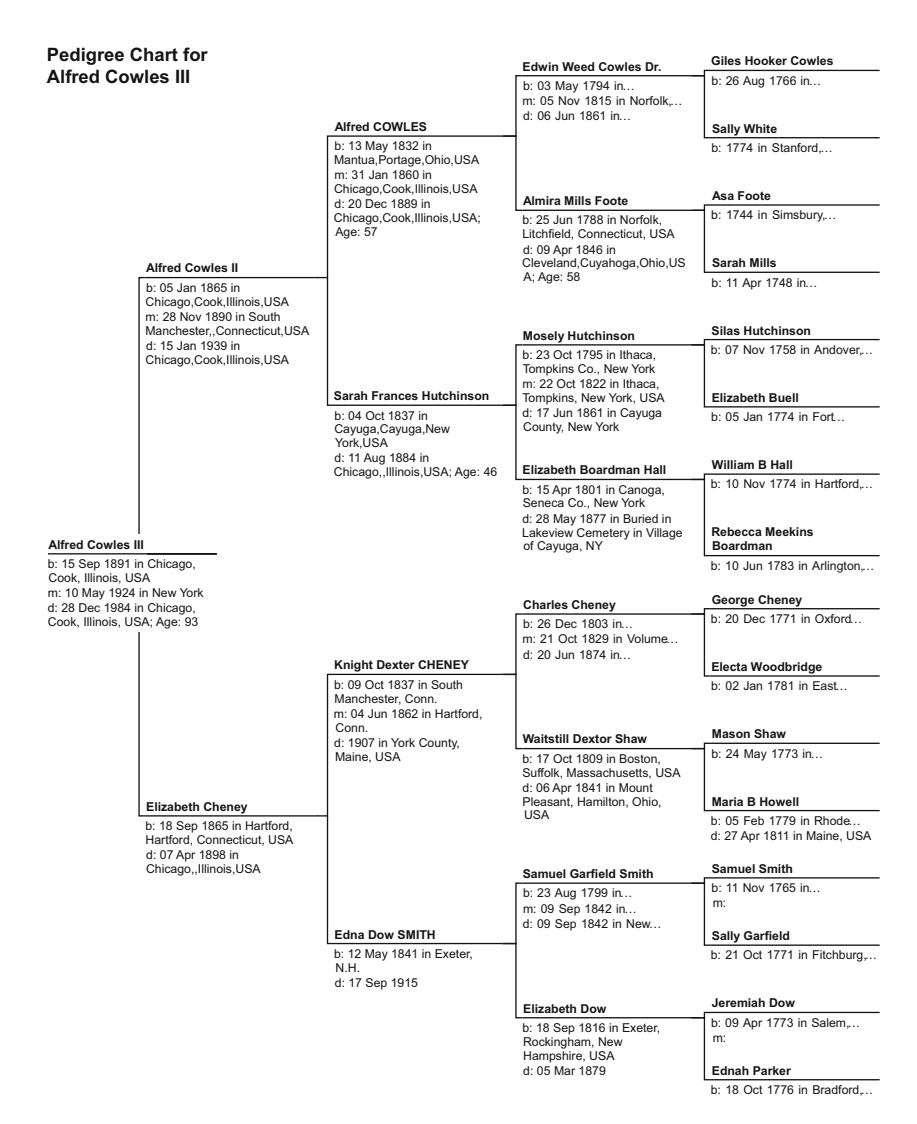

| Fig. 19.2 | Immediate ancestry of Alfred Cowles III | 180 |

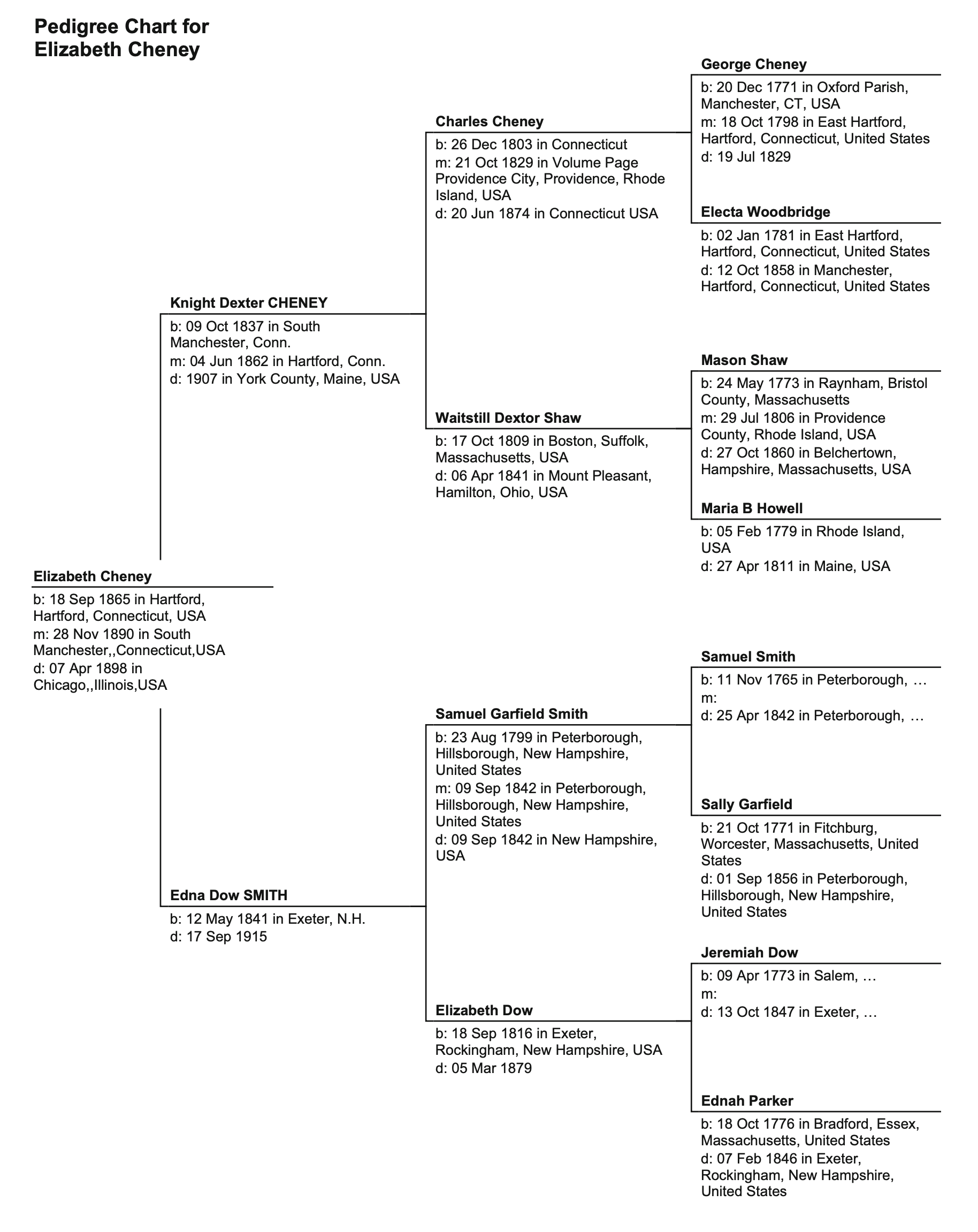

| Fig. 19.3 | Ancestry of Elizabeth Cheney | 184 |

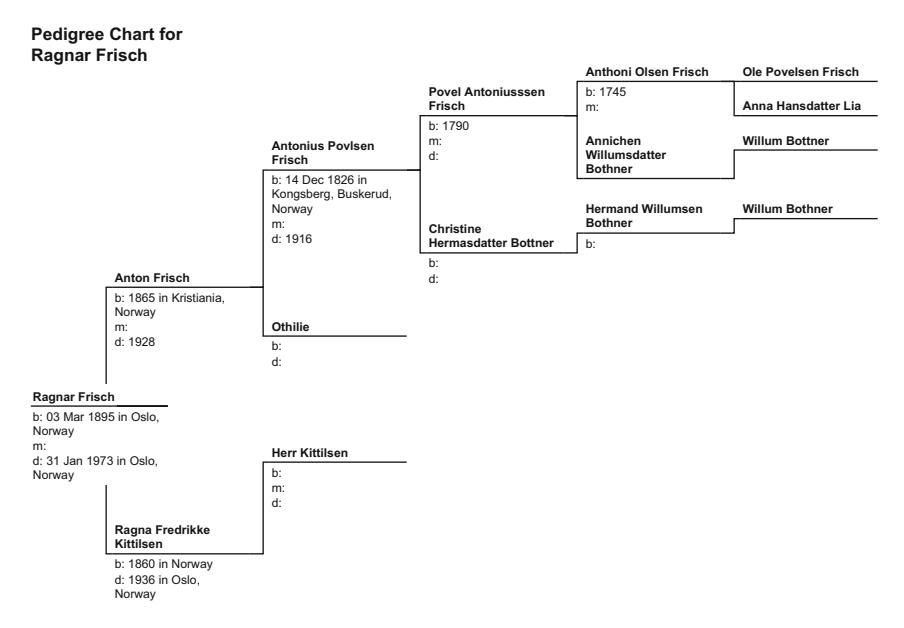

| Fig. 23.1 | Ancestry of Ragnar Frisch | 202 |

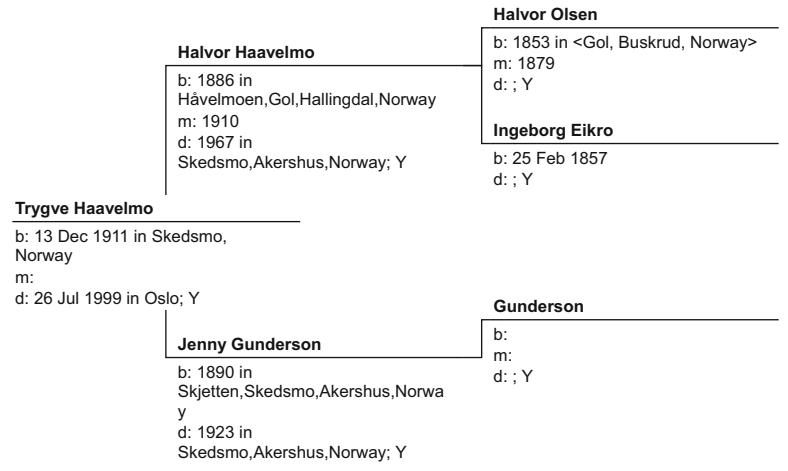

| Fig. 27.1 | Ancestry of the Haavelmo family | 224 |

Part I: Mathematicians and Astronomers

We begin with the struggle of some great mathematicians who wrestled first with an understanding of problems that concerned their gambling patrons, and then with ways their understanding of probability could be used to better predict the movement of the planets.

These explorations over the seventeenth and eighteenth centuries eventually allowed a very young nineteenth-century theorist to translate the insights of those who came earlier into a discovery that revolutionized almost every aspect of science, including finance and statistics. Perhaps what is most surprising, though, is that the genius of Carl Friedrich Gauss came from the most humble of beginnings.

1. The Early Life of Carl Friedrich Gauss

There is perhaps no discipline that is as intrinsically tied to data as the study of finance. Every financial theory is formulated not for some esoteric purpose, but rather to better understand future occurrences based on past information. This world of financial data is so broad that it makes little sense unless it can be simplified and represented by a few familiar measures. Our models then incorporate these measures to predict movements in financial variables. This problem is not unlike the challenge of those who gazed at the planets and stars and tried to predict their motion. One such mathematical explorer enjoyed more success in challenging predictions than any other. His surprisingly humble upbringing almost defies his incredible insights and contributions to dozens of sciences since, finance included.

The circle of academics was an extremely small one before the twentieth century. There was no public education, and hence little opportunity for higher education, except for the noble and elite. Nor was science so technical then that it commanded extensive knowledge, or large teams devoted to research. Indeed, intellectual discovery was a luxury supported by family wealth and royal courts which might sponsor one or two such men of ideas.

These prodigies were viewed more as intellectual athletes and mystics. They would be pitted against each other to demonstrate their intellectual cunning and lend pride to their patrons. These were not the circles in which a humble boy of modest means and upbringing could find himself.

Carl Friedrich Gauss was an exception. He also became one of the most exceptional mathematicians of all time, who is spoken of in the same breath only with Euclid and Newton.

Gauss came from a family of farmers and laborers. His great-great-grandfather, Hans Gauss (c.1600–?), was born in Hanover, Germany, and had found his way to Wendeburg, a small farming village in the neighboring district of Peine of Germany’s Lower Saxony region. He had a family of small size in this era: a wife, two sons and four daughters. At first, his family and progeny remained close to home.

His son Henrich Gauss (1 December 1648–25 October 1726) was born in Wendeburg. He married three times. The first marriage resulted in a dowry of a farm that had belonged to his first wife, Anna Grove, a widow, in nearby Volkenrode, less than six kilometers to the southeast of Wendeburg (Fig. 1.1).

Henrich had a dozen children, four from each marriage, and was left a widower from each of his first two marriages. His last marriage resulted in a son Jurgen.

Jurgen Gauss (or Goos) (3 November 1712–5 July 1774) was born in Volkenrode and grew up tending the farm. However, as one of the last of a dozen children, there would be no room for him on the farm once he became an adult. Instead, he moved to the large German city of Braunschweig, or Brunswiek in Low German, Brunswick in English, at the extreme southern port of the Oker River as it makes its way toward the North Sea. At the time, Brunswick was a major economic and cultural center for Germany. It was also a center for education.

Jurgen Gauss arrived in Brunswick late in the decade of the 1730s, with a new bride, Katharine Magdalene Eggling (5 March 1713–3 April 1774), the daughter of Hans Heinrich Eggling (1667–25 December 1714) and Cathrine Heuer. Soon after his acceptance as a resident of Brunswick, he took his first job off the farm, on 23 January 1739. His adopted city first employed him as a day laborer, then as a clay mason and a butcher. Each of the latter occupations entitled Jurgen to guild membership, and all would provide him with employment through the four seasons. The family enjoyed a level of urban economic comfort not readily afforded to the youngest child in a farming family.

Fig. 1.1 The ancestry of Carl Friedrich Gauss

Jurgen Gauss and Katharine soon secured a small and narrow house for his family, at 10 Ritterbrunnen. They lived in that home for 14 years, and raised four children there, including an eldest son, Gebhard Dietrich Gauss (13 February 1744–14 April 1808). The family then moved to a larger home at 30 Wilhelmstrasse, where Jurgen would die of tuberculosis on 5 July 1774, just three months and two days after the death of his wife from a prolonged fever.

By the time his father died, the second child and the eldest son, Gebhard, had worked and learned the family trades. Upon his parents’ deaths, Gebhard used a dowry from his first wife, Dorothea Emerenzia Warnecke, and a loan from the town’s mayor Wilmerding, to buy out the shares of his family home from his brothers Johanne Franz Heinrich and Peter Heinrich. By the age of 30, Gebhard was able to provide a home and a secure but not affluent living for his own family.

Gebhard’s first wife did not long enjoy the house, though. She died on 5 September 1775 of tuberculosis as had her father-in-law, but not before giving birth to a boy. Johann Georg Heinrich was born on 14 January 1769.

Seven months after Dorothea Warnecke died, Gebhard married Dorothea Benze, the daughter of a stonemason, Christophe Benze (2 March 1717–1 September 1748). Dorothea’s father died prematurely as well, from pulmonary respiratory illness associated with his profession as a stonemason. He also left a son, Johann Friedrich. Both of Christophe Benze’s children were thoughtful and intelligent, but neither enjoyed the luxury of formal schooling.

Dorothea was illiterate, but she was a kind and nurturing woman by nature. She worked as a maid before she married Gebhard. While Gebhard was also uneducated, he nonetheless managed to be appointed the city’s master of waterworks as he was an experienced stonemason and was reasonably good with sums.

In contrast to his wife’s gentle nature, Gebhard was quite domineering as a father, and was considered somewhat uncouth. Yet, he provided reasonably well for his family.

The Arrival of Carl Gauss

Gebhard and his second wife had a son just over a year after their marriage on 25 April 1776. Carl Friedrich was born in the family home in Brunswick on 30 April 1777, on the Wednesday eight days before the Ascension. He was an only child of the second marriage, but was a half brother to Gebhard’s eldest son Johann.

When a young Carl once quizzed his mother about his birthdate, she could not recall the exact date. Later in life, Carl was able to calculate it based on his mother’s recollection of his birth before the Ascension. At a young age, he used this small family mystery as an opportunity to develop a formula for the date on which Easter falls in any given year. It did not take long for his family to discover that the young Gauss was a mathematical prodigy.

Carl had great aff ection for his mother, and for her brother, his uncle Johann Friedrich, but had a somewhat awkward relationship with his half brother. Indeed, he did not know his brother Johann Georg very well. More than eight years his senior, Johann struck out on his own as a day laborer before Carl’s tenth birthday. Johann returned home some years later, but an eye injury made him only of limited help to his father. Instead, Johann enlisted in the army for almost a decade, and returned to his family home to take over his father’s trade once his father died on 14 April 1808.

While Carl Friedrich grew up with a harsh and domineering father, he enjoyed the better nature of his kind and devoted mother. In turn, he doted on his mother all his life, until Dorothea’s death at the remarkably advanced age of 97, even through her infirmities and her affliction with blindness in her last four years.

Carl harbored fond and vivid memories of his childhood. One of his earliest memories was falling into the river and being saved at a very young age. This terrifying memory did not taint his recollections otherwise, though.

He also recalled that he taught himself to read by asking his family members how to pronounce letters on the page. His ability to manipulate numbers became a favorite parlor trick among family friends. When his father would pay his bricklaying workers, a three-year-old Carl once impudently but accurately corrected his father’s calculations. While access to school was out of the question for many children in his neighborhood, his intellectual precociousness compelled his family to consider his education.

In 1784, when young Carl was seven years of age, his father agreed to let him enter nearby St. Katharine’s Volksschule, a people’s school for the more motivated of the children of non-prosperous families. The school, which adjoined St. Katharine’s Church, had a teacher named J.G. Buttner who oversaw a dark and dank classroom of 200 children.

Young Gauss endured two years among those 200 classmates, but managed to stand out nonetheless. When the teacher gave his students an assignment he felt would keep them occupied for hours, Gauss almost immediately announced the solution. The teacher felt it impossible that Gauss could have so quickly added all the numbers from 1 to 100. Yet, an eight-year-old Gauss reasoned his way to a solution.

Gauss had noticed that the first and last number in the sequence, 1 and 100, added to 101. So did the second and the second to last, 2 and 99, and, indeed, so do all 50 such pairs. Gauss proclaimed that 50 pairs which each add to 101 must then total 5050. While he may not have been praised for his brilliance, he was at least spared the whip that often accompanied the incorrect answers from lesser classmates.
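The pairing argument can be checked in a few lines. What follows is a minimal Python sketch; the function name `paired_sum` is our own label for illustration and does not come from Gauss or from any historical account.

```python
def paired_sum(n: int) -> int:
    """Sum 1..n by pairing the first term with the last (n must be even)."""
    if n % 2 != 0:
        raise ValueError("this sketch pairs terms, so n must be even")
    pairs = n // 2       # 50 pairs when n = 100
    pair_total = 1 + n   # every pair adds to 101 when n = 100
    return pairs * pair_total


total = paired_sum(100)
brute_force = sum(range(1, 101))  # direct addition, for comparison
print(total, brute_force)
```

Both values agree, which is all the schoolroom story requires: the pairing replaces 99 additions with a single multiplication.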

Headmaster Buttner quickly realized Gauss needed a more sophisticated curriculum. He ordered an advanced textbook for the boy, but professed that there was little he could teach the young prodigy. Fortunately, word got out in Brunswick about the boy wonder. Carl’s father relented to the headmaster’s admonishments that Carl spend the evening studying rather than completing chores, and allowed the neighbor’s boy, Johann Christian Martin Bartels (1769–1836), eight years Carl’s senior, and with a strong interest and competency in mathematics, to supplement Carl’s education. By lamplight, Carl learned mathematics together with the much older boy, and kept pace as Bartels absorbed such concepts as the binomial theorem and infinite series.

Meanwhile, Bartels worked to find a patron for Carl as he pursued his own studies in mathematics. His Collegium teacher, Eberhard August Wilhelm Zimmermann (17 August 1743–4 July 1815), had studied mathematics at the prestigious Göttingen University and was teaching mathematics at the Collegium Carolinum in Brunswick. When Bartels began classes with Zimmermann, he brought the abilities of the 11-year-old Carl Gauss to his professor’s attention. By then, Zimmermann was well respected by the local nobility, and had already been awarded the distinction of Councilor in the region. Seven years later, he was further bestowed a noble title by Duke Karl Wilhelm Ferdinand.

Zimmermann accepted the challenge of instructing the young prodigy. Carl Gauss was given the run of the Collegium, despite his young age, and spent almost all his free time at the school.

A few years later, the Duchess Ferdinand came across the young boy reading a book that seemed most advanced for his age. Astonished, she quizzed him on his studies, and was impressed by his knowledge of the classics, of literature and of mathematics.

At the Duchess’ behest, the Duke sent an aide to fetch young Gauss. The aide first demanded that Carl’s older brother accompany him to the palace, but Johann Georg insisted it was his young half brother Carl for whom the Duke had sent.

The Duke took under his wing a young working-class boy, one with rough working-class edges who spoke Low German. Despite these social handicaps, the Duke funded Carl’s regular attendance at the Collegium Carolinum in 1792. Only 15 years old, Carl was already more intellectually advanced than most of the other students. In addition to the annual stipend the Duke paid on behalf of Carl, the Duke also offered Carl’s teacher Zimmermann expenses to oversee his education, for as long as Gauss attended the local college.

Young Carl studied at the Collegium Carolinum for four years. He was exposed to the Classics, to Greek and Latin and to the mathematics of Sir Isaac Newton (25 December 1642–20 March 1726), Leonhard Euler (15 April 1707–18 September 1783) and Joseph-Louis Lagrange (25 January 1736–10 April 1813). By his last year at the college, Gauss had become intellectually captivated by the astronomical explorations of Newton, and had even developed his method of least squares to tease trends from cosmological observations subject to random errors. While still a teenager at the Collegium, Gauss also became intrigued with prime numbers, a fascination he would pursue further upon his matriculation to the University of Göttingen.

It was at the Collegium that Carl also developed the habit of intellectual innovation primarily for his own curiosity’s sake. He only occasionally felt the need to aggregate his ideas into papers, even though he soon realized the need to record his results. He meticulously began to document his mathematical innovations in a series of dated notebook entries that he kept for his entire life.

On 21 August 1795, when Carl was 18 years old, the Duke ordered an increase in the stipend to be paid to Carl so that Carl could begin attending the University. This sum covered tuition and all living expenses. On 11 October, Gauss left his hometown for the 200-kilometer trip to Göttingen, one of the nation’s most prestigious and accomplished universities. Four days after departing Brunswick, Gauss arrived and was admitted as a mathematics student to Göttingen. Carl chose the university because of its extensive mathematics library. He chose well and remained the pride of Brunswick forever after.

The next spring, on 30 March 1796, Gauss published notice of his first major discovery. He recorded that he found a constructible solution to the 17-sided regular polygon problem that had perplexed mathematicians for 2000 years. He later recalled how he awoke from a vacation in Brunswick with the realization of a solution to the problem \((z^p - 1)/(z - 1) = 0\), which led to the solution to the constructible 17-gon.

Upon his discovery, Gauss published a notice of his result so that he might lay prior claim within the scientific community. This he had done rarely in his life because, for him, his exercise of mathematics was to satisfy his own intellectual curiosity and solve his practical problems in astronomy and geodesy. Not always would he fully flesh out his ideas into a publishable form because, for him, completing the proof was either obvious or unnecessary. And publication was expensive in both time and money. Indeed, it would take Gauss almost a decade to publish the proof of his result on the 17-gon. This pattern repeated itself many times over his life. Almost invariably, his briefest assertion of myriad amazing discoveries stood the test of more rigorous treatment, often at the hands of others, and generally requiring hundreds of pages of advanced mathematics, and many decades later.

What is apparent in Gauss’ brilliance at an early age is that his life was anything but conventional. That may be the source of his brilliance. While lesser children of more affluent parents would enjoy a lifetime of forced stimulation through the minds of tutors and professors, they must always glimpse the world through someone else’s eyes. Gauss knew no such world, nor any such preconceptions. He conceived his intellectual world anew, perhaps because he was often “taught” by those less accomplished and talented than he was. His learning defied conventional wisdom. This allowed him to look at fundamental problems without any blinders or preconceptions and, for that matter, without anybody telling him his brilliant and unique approaches could not work because, after all, no great mind before him had solved the same problem. His scholarly bravado and courage to take on and solve a two-millennia-old problem, and, in doing so, unite and perhaps even invent three branches of mathematics, can be credited to his upbringing and lack of academic pedigree.

2. The Times of Carl Friedrich Gauss

Gauss loved numbers. When he imagined geometric concepts and from them developed what we would now call abstract algebra, he came to shapes from the perspective that these new approaches would help him better understand the nature of numbers. Ever since his elementary school experience in which he successfully solved a problem for his teacher based on the application of a numerical series, Gauss had numbers, integers and shapes racing in his head. While he was already publishing academic papers of high quality at the age of 18, Gauss had been interested in arithmetic and geometric means for 4 years by then, and, by 17, had explored the representation of average values through power series, and the method of least squares. Gauss developed these concepts from a position of great practicality. He used numbers, and especially their patterns, to better understand practical problems. His choice of study at the University of Göttingen was an ideal match for this intellectual curiosity.

The University of Göttingen, or, in German, Georg-August-Universität Göttingen, GAU for short, is one of Germany’s most prestigious establishments for higher education. It was founded in 1734 by King George II of Great Britain, who was also the Elector of Hanover. Göttingen began classes in 1737. The quality of the institution has placed its host city at the center of learning in Germany ever since. The brilliant mathematicians Bernhard Riemann (17 September 1826–20 July 1866), David Hilbert (23 January 1862–14 February 1943), Peter Gustav Lejeune Dirichlet (13 February 1805–5 May 1859) and John von Neumann (28 December 1903–8 February 1957), and great physicists such as Max Born (11 December 1882–5 January 1970), Julius Robert Oppenheimer (22 April 1904–18 February 1967), Max Planck (23 April 1858–4 October 1947), Werner Karl Heisenberg (5 December 1901–1 February 1976), Enrico Fermi (29 September 1901–28 November 1954) and Wolfgang Pauli (25 April 1900–15 December 1958) all studied or taught there. So did the international banker and financier John Pierpont “J.P.” Morgan (17 April 1837–31 March 1913). It was also the notorious epicenter of Adolf Hitler’s (20 April 1889–30 April 1945) Great Purge of Jewish academics in 1933. Göttingen was an intellectual capital unparalleled in the celebration of abstract thought since the early 1800s, or perhaps since Carl Friedrich Gauss first studied there.

Those accepted to study at Göttingen were invariably gifted. But, few came from such modest means as had Gauss, nor with such wealthy patrons as Duke Ferdinand. When Gauss was admitted at Göttingen on 15 October 1795,1 at the age of 18, he did not yet know whether he wished to study mathematics or philology. Gauss loved words almost as much as he knew numbers.

Philology was a classical and well-appreciated discipline in the nineteenth century that divined language from the historical written record. Philologists were Renaissance scholars who used their skills in history, linguistics and literary criticism to solve literary and historical puzzles. It was at that time a foundation for what might more broadly be described as the humanities today. The discipline’s goal of solving historical and literary puzzles appealed to Gauss’ curiosity in the same way numbers did, and drew upon his extensive knowledge of many languages just as mathematics drew upon his command of many methodologies.

Fortunately for modern science, mathematics won Gauss’ attention, partly because the patronage he enjoyed allowed him to be less concerned about tuition and eventual salaries, and more receptive to the study of science, with all its economic impracticality. During his first year of study, he mostly read books from the renowned Göttingen library on the humanities, on philology and on travel. But, that first year sparked a passion for mathematics when Gauss managed to devise his first solution to a then intractable mathematical problem. He had become fascinated by the problem of dividing a circle into 17 parts through the creation of a 17-sided polygon constructed only with Euclidean tools.

It is instructive to explore Gauss’ path through the construction of his problem and solution, as it tells us much about the brilliance of this 18-year-old, about the development of the new field of complex algebra ever since, and about the way Gauss thought, in geometric terms that would become the hallmark of the greatest minds of statistics and econometrics.

The Greeks before the birth of Jesus Christ had been fascinated with the geometries that could be constructed with a simple compass and straightedge. Indeed, these early geometers had no number system yet. These tools of the compass and straightedge were what Euclid (about 300 BC) employed to construct regular polygons. These regular polygons have angles between apexes that are all equal, and sides that are the same length, such as triangles, squares, hexagons and octagons.

The Greeks were fascinated by the properties of such polygons that were contained within a circle. By calculating the area of regular polygons of ever increasing number of sides, they could even approximate the area of a circle and the number pi with great accuracy.
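The convergence of polygon areas toward the area of the circle can be illustrated numerically. The sketch below is a modern convenience, not the Greek method: it relies on the sine function (and on a value of pi inside the library) that the Greek geometers did not have, and the function name `inscribed_polygon_area` is our own.

```python
import math


def inscribed_polygon_area(n: int) -> float:
    """Area of a regular n-sided polygon inscribed in a circle of radius 1."""
    # The polygon splits into n isosceles triangles, each with two unit
    # sides meeting at the center with an angle of 2*pi/n between them.
    return 0.5 * n * math.sin(2 * math.pi / n)


# Doubling the number of sides repeatedly, starting from the hexagon
for sides in (6, 12, 24, 48, 96):
    print(sides, inscribed_polygon_area(sides))
```

Each doubling of the number of sides brings the area closer to that of the unit circle, which equals pi, always from below.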

The Greeks quickly discovered that they could easily construct such polygons with an even number of sides by forming isosceles triangles with two equal sides in the space from the center of the circle to its circumference. They could easily construct even-number-sided polygons within the unit circle by further subdividing known polygons with an even number of sides. Such exercises allowed the Greeks to construct extensive proofs of the properties of lines, circles, triangles, squares and octagons.

While they did not actually develop a number system from the length of the sides of these polygons, they were clearly dabbling in number theory. We are reminded of this geometric interpretation when we think of raising a number to the power of two as squaring the number. We can now see these Greek geometers were on the verge of discovering algebra, polynomials, roots of polynomials, negative numbers and imaginary numbers. But, that leap in understanding would take almost two millennia to make.

For instance, consider the area of a square drawn from the apex F of a semicircle of width AX and height AF = AX, and hence of area AX*AX. The Greeks denoted this as the geometric mean of the lengths AX and AB (Fig. 2.1):

If we denote the length of the longer ray AB as a, the length of the distance between X and B as x, and the length of the shorter rays AF, or AX as b = a−x, then a/x = x/(a−x). The length x is then the geometric mean of a and a−x, and is also the root to the polynomial obtained from equating the ratios a/x and x/(a−x). Cross-multiplying, we can express the equality of these ratios as \(x^2 = a(a - x)\). Then:

x is the root of \[x^2 + ax - a^2 = 0\] .

This value of x, now more commonly expressed as the square root of the product of two numbers a and b, was generated by the Greeks using only the geometric comparisons of sides of polygons. In doing so, the Greeks were solving the roots to common polynomials using geometric analogues, but not yet with a formal algebra.
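As a numerical check on this reasoning, we can solve the quadratic \(x^2 + ax - a^2 = 0\) with the modern quadratic formula and confirm that its positive root is the geometric mean of a and b = a − x. This is a sketch only; the choice a = 1 is an arbitrary assumption made for illustration.

```python
import math

a = 1.0  # length of AB; any positive value would do
# Positive root of x^2 + a*x - a^2 = 0 from the quadratic formula
x = (-a + math.sqrt(a * a + 4 * a * a)) / 2
b = a - x  # the shorter length

geometric_mean = math.sqrt(a * b)
print(x, geometric_mean)
```

The two printed values coincide, and the ratios a/x and x/(a − x) are equal, as the geometric construction requires.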

Fig. 2.1 The calculation of geometric means

The ancient Greeks had learned to construct various polygons from the square, and the series of even-sided polygons that were multiples of the four-sided figure and its successors. They could also do the same for the triangle and its even-numbered multiples. Their first challenge, though, was to form a pentagon.

Such a five-sided polygon had mystic charms, as had its relative, the five-sided star. The pentagram was the mystic symbol of the Pythagorean brotherhood. The Greeks showed that the length of the sides of such regular polygons enclosed in a circle of unit radius could be expressed with ratios of integers and their square roots (or geometric means). For instance, a pentagon within a circle can be constructed as five identical equilateral polygons much like five pieces of an evenly cut pie, with the round edges “squared-off.” Such polygons might look as in Fig. 2.2.

We learn in high school trigonometry class that if we divide the 360° of the circle into five identical parts of 72° each, then the width of the first such triangle enclosed in a unit circle is represented by the distance from the origin to the point A, or \(\cos(360^\circ/5) = \cos(72^\circ) = (\sqrt{5} - 1)/4\).
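The closed form for the cosine of 72°, namely the square root of 5, less 1, all divided by 4, uses only integers, a ratio and a square root. A short Python check, offered as an illustrative sketch, compares it with the library cosine:

```python
import math

closed_form = (math.sqrt(5) - 1) / 4      # built from integers, a ratio and a square root
numeric = math.cos(math.radians(72))      # library value of cos(72 degrees)
print(closed_form, numeric)
```

Agreement between the two values is what makes the pentagon reachable with the Greek toolkit of ratios and geometric means.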

Fig. 2.2 The pentagon in a unit circle

The Greeks knew how to construct a unit circle with a compass, and they could find the length of the square root of 5 by observing that its value was simply the geometric mean between 5 and 1. In fact, the Greeks were able to show that, using only a straightedge and compass, or, equivalently, the tools of addition, subtraction, ratios and square roots, formed from the congruencies in triangles and formulation of geometric means, they could construct polygons with n sides for n = 3, 4, 5, 6, 10 and 15, and, of course, 8, 12 and 16 that follow naturally from the square, the hexagon and the octagon. This realization became a fourth assertion in addition to the Greeks’ three famous problems of trisecting an angle, doubling a cube and squaring the circle. The latter problem required the creation of a square with the same area as a given circle.

At the age of 18, Gauss proved that the first two of these assertions of the three famous problems are impossible using only a compass and straightedge. He also showed which n-sided regular polygons were constructible, that is, which could be constructed only with a compass and straightedge, or, equivalently, with sides of a length that are the sum only of ratios of integers or square roots (geometric means). Gauss determined that, for a prime number of sides, constructability could occur only if that prime can be expressed as \(2^m + 1\), where m is an integer.
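The criterion for a prime number of sides is easy to test by machine. The following Python sketch lists the primes below 100 that are one more than a power of two; the helper names `is_prime` and `is_power_of_two_plus_one` are our own, and the sketch addresses only prime side counts, not composite ones such as 4, 6 or 15.

```python
def is_prime(n: int) -> bool:
    """Trial division, adequate for the small numbers used here."""
    if n < 2:
        return False
    i = 2
    while i * i <= n:
        if n % i == 0:
            return False
        i += 1
    return True


def is_power_of_two_plus_one(n: int) -> bool:
    """True if n = 2**m + 1 for some integer m >= 0."""
    k = n - 1
    return k >= 1 and (k & (k - 1)) == 0


constructible_primes = [
    p for p in range(3, 100) if is_prime(p) and is_power_of_two_plus_one(p)
]
print(constructible_primes)
```

The primes 7, 11 and 13 fail the test, while 17 passes, which matches the account of the polygons that resisted construction.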

Had the Greeks known Gauss’ insight, the world would have been spared many person-years trying to construct 7-, 11- and 13-sided polygons over the intervening two millennia. The smallest constructible polygon that remained unconstructed by the Greeks or by those who followed for more than two millennia was the 17-sided heptadecagon—until an 18-year-old Gauss proved its construction, and, in turn, created a new and incredibly important way to look at the correspondence between polygons and the number system.

Gauss’ solution came in a flash of insight. He showed 7-, 11- and 13-sided regular polygons could not be constructed, and demonstrated the constructability of the 17-sided regular polygon. In doing so, he created whole new methods of mathematical analysis without which many of our most profound technological achievements today would have been impossible.

His sheer excitement at his discovery also induced him to dedicate his life to the study of mathematics, as opposed to his competing interests in philology and the classics.

Gauss’ original insight was providential. He had realized that the problems the Greeks wrestled with could often be expressed as roots to equations of the form \((x^p - 1)/(x - 1) = 0\). This family of problems, for various values of p, had intrigued scholars for a century. But, just as Albert Einstein (14 March 1879–18 April 1955) had looked at the problem which perplexed Ludvig Lorenz (18 January 1829–9 June 1891), and Albert Abraham Michelson (19 December 1852–9 May 1931) and Edward Williams Morley (29 January 1838–24 February 1923), among others, and, by casting the nature of the space-time relationship in a different light, completely recast classical physics, Gauss awoke one morning while on holiday in Brunswick with a solution in mind to an equally baffling problem. How he dealt with his first important discovery became the template for his mathematical pragmatism over a lifetime. And his often nonchalant confidence and intellectual dismissiveness that followed also shed light on why some attribute to others the legitimate discoveries he had made.
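To make the connection between this equation and the 17-sided polygon concrete, the Python sketch below treats the roots as points on the unit circle in the complex plane, which is how the equation is read today. Two things here are assumptions brought in from outside the text: the identity that the quotient equals 1 + x + ... + x^(p−1), and the nested square-root expression for cos(2π/17), which is the closed form commonly credited to Gauss.

```python
import cmath
import math

p = 17
# The roots of (x**p - 1)/(x - 1) = 0 are the p-th roots of unity other than 1
roots = [cmath.exp(2j * math.pi * k / p) for k in range(1, p)]

# Each root should make 1 + x + x**2 + ... + x**(p-1) vanish
residuals = [abs(sum(r ** j for j in range(p))) for r in roots]

# Closed form for cos(2*pi/17) using only integers, ratios and square roots
s17 = math.sqrt(17)
closed_form = (
    -1
    + s17
    + math.sqrt(34 - 2 * s17)
    + 2 * math.sqrt(
        17 + 3 * s17 - math.sqrt(34 - 2 * s17) - 2 * math.sqrt(34 + 2 * s17)
    )
) / 16

print(max(residuals))
print(closed_form, math.cos(2 * math.pi / p))
```

Because the closed form involves nothing beyond square roots, the corresponding length can be laid out with compass and straightedge, which is the sense in which the 17-gon is constructible.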

The Creation of Complex Numbers

The pieces of this first puzzle a teenage Gauss solved were contemplated well before he recast the problem so successfully. While the Greeks had not developed a full-fledged real number system that included irrational numbers, they were adept at geometrical constructs. The real number system would take some time to be fully fleshed out, and even then it alone could not solve Gauss’ problem.

The followers of Pythagoras believed that all numbers should be positive whole numbers. Associated with such natural numbers is the physical analogue of length. The Pythagoreans of the fifth century BC also admitted the rationals that could be represented as the ratio of whole numbers. This created a problem and some arithmetic heresy for the Pythagoreans.

Consider the right isosceles triangle with two sides of equal length q and a hypotenuse of length p. Then, we recall from Pythagoras’ theorem that \(p^2 = 2q^2\). Could the ratio p/q of the hypotenuse to one side of the triangle be represented by a ratio of the two smallest whole numbers that share no common factors? (See Fig. 2.3.)

The Pythagoreans relied on geometry in such proofs. At that time, it was arithmetic heresy to conclude the existence of an irrational number that could not be expressed as the ratio of two whole numbers.

Fig. 2.3 An isosceles triangle of hypotenuse p and adjacent and opposite sides q

Here is the algebraically heretical dilemma. If the length of one side q were an odd number, then twice its square must be even. Hence \(p^2\) must be even as well. This implies that p itself must be even since an odd number squared is always odd. Yet, if p is even, it could be represented as 2r, which implies \(4r^2 = 2q^2\), or \(2r^2 = q^2\). Then, q must be even. However, p and q cannot both be even if their ratio p/q has no common factors.
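The parity argument is a proof; a computer search is not. Still, a brief Python sketch can illustrate what the proof guarantees by looking for whole numbers p and q that satisfy the Pythagorean relation for the isosceles right triangle, p squared equal to twice q squared. The function name `first_rational_sqrt2` and the search limit are our own assumptions.

```python
from math import isqrt


def first_rational_sqrt2(limit: int):
    """Return the first (p, q) with p*p == 2*q*q for 1 <= q <= limit, else None."""
    for q in range(1, limit + 1):
        target = 2 * q * q
        p = isqrt(target)       # integer part of the square root
        if p * p == target:     # exact only if 2*q*q is a perfect square
            return (p, q)
    return None


print(first_rational_sqrt2(2000))
```

The search comes back empty for every limit one cares to try, as the contradiction above says it must.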

Some intellectually daring Pythagoreans realized this contradiction. An isosceles triangle with unit sides q = 1 must have a hypotenuse of a length p that is the square root of 2. While the Pythagoreans who realized this number must be irrational violated the tenets of the brotherhood, they nonetheless admitted the extension of the number system to the irrational roots for some of the integers 3, 5, 6, 7, 8, 10, 11, 12, 13, 14, 15, all the way to the whole number 17.

There remained two other aspects of the number system that the Pythagoreans believed unimaginable. These were the existence of negative and imaginary numbers. Until 1545, no European mathematician had postulated, or had the courage to postulate, their existence. The more complex of these two concepts, the imaginary numbers were actually described first.

Some had come tantalizingly close to discovering imaginary numbers. While Europe was immersed in its Dark Ages, the scholars of Arabia were the princes of science of their day. In Baghdad in the early ninth century, the caliph al-Ma’mun was the patron of a group of learned men known as the House of Wisdom. Al-Khwarizmi (780–850) had developed solutions to quadratic equations but restricted his solutions to those that yielded positive numbers. The negative and imaginary roots to which we are accustomed in high school were discarded as nonsensical.

Three centuries later, the Latin translation by Gerard of Cremona (1114–87) of al-Khwarizmi’s Algebra came to the attention of Leonardo da Pisa (1170–1250). Leonardo was asked to determine the roots of a simple cubic equation \(x^3 + 2x^2 + 10x = 20\). This is a specific version of the general form \(x^3 + ax^2 + bx + c = 0\), which can be shown to be reducible to a simpler equation:

\[z^3 + pz + q = 0,\]

through a change of variables in which z = x + a/3. If we restrict the parameters and solution to positive numbers, there are three possible versions of the equation to solve. A professor of Arithmetic and Geometry at the University of Bologna, Scipione del Ferro (6 February 1465–5 November 1526) discovered how to solve these three versions, now known as the depressed cubic equation.
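The change of variables can be traced on Leonardo’s own cubic. The Python sketch below substitutes x = z − a/3, which gives p = b − a²/3 and q = 2a³/27 − ab/3 + c, and then applies the formula now known as Cardano’s to the depressed equation. The text does not state that formula; it is supplied here as an assumption for illustration, and `cbrt` is our own helper for real cube roots.

```python
import math


def cbrt(v: float) -> float:
    """Real cube root that also handles negative inputs."""
    return math.copysign(abs(v) ** (1.0 / 3.0), v)


# Leonardo's cubic x^3 + 2x^2 + 10x - 20 = 0, so a = 2, b = 10, c = -20
a, b, c = 2.0, 10.0, -20.0

# Coefficients of the depressed cubic z^3 + p*z + q = 0, where z = x + a/3
p = b - a * a / 3.0
q = 2.0 * a ** 3 / 27.0 - a * b / 3.0 + c

# Cardano-style solution; disc is positive here, so there is one real root
disc = q * q / 4.0 + p ** 3 / 27.0
z = cbrt(-q / 2.0 + math.sqrt(disc)) + cbrt(-q / 2.0 - math.sqrt(disc))
x = z - a / 3.0

print(p, q)
print(x, x ** 3 + 2 * x ** 2 + 10 * x - 20)
```

The root is approximately 1.3688, and substituting it back into the original cubic leaves a residual that is zero to within rounding error.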

In that era, professors held an almost mythical reputation. Their cachet was to be able to discover solutions to problems posed by other professors. These challenges and defenses earned the successful solvers their professorships. Hence, these academic mystics often held close their solution methods. Meanwhile, their patrons considered these scientific mystics exclusive property of their royal courts.

Del Ferro took his secret solutions to his grave in 1526. A notebook that recorded his secrets was inherited by his daughter, Filippa, and her husband Hannival Nave, his former student who assumed del Ferro’s position at the university upon his death.

Scipione del Ferro had confided his secret solution to another one of his students though, named Antonio Maria Fiore. With del Ferro’s insights in hand, Fiore challenged another mathematician, Niccolò Tartaglia (1499–13 December 1557), to a contest to solve a set of cubic equations. Having heard rumors of the existence of a solution to the cubic equation, Tartaglia accepted the challenge and set out to discover a general solution. Indeed, he discovered a more general solution to any cubic equation, while his challenger possessed only a solution to a particular set of cubic equations. In the contest, which lasted only a couple of hours, Tartaglia was able to solve all 30 of the problems posed to him, while Fiore was unable to solve any of the more general cubic equations Tartaglia had posed.

Gerolamo Cardano (24 September 1501–21 September 1576), one of the three greatest scientific minds of the pre-Renaissance period, had heard of Tartaglia’s triumph and invited Tartaglia to visit Milan under the premise that he had arranged for Tartaglia a patron to fund his work. Instead, upon his arrival in Milan, Tartaglia was asked to reveal to Cardano the solution so Cardano could include it in his forthcoming mathematical treatise. Tartaglia obliged, under the promise that Cardano would not publish his own work until Tartaglia was afforded an opportunity to publish the general solution to cubic equations.

With this tantalizing solution at hand, Cardano went on to further extend and generalize the solution. Once he discovered that it was actually Scipione del Ferro who first discovered a restricted solution, Cardano felt freed from his promise to Tartaglia and included his innovative solution to the general cubic equation in the treatise Ars Magna.

In his treatise, Cardano established a number of principles that would prove useful to Gauss almost three centuries later. First, he demonstrated in Chapter One of his book that equations can have multiple roots. Until then, some roots of equations were ignored as impossible because they yielded nonsensical numbers less than zero. For instance, the roots of \(x^2 - 1\) are +1 and −1, but contemporaries rejected the notion of a number less than nothing.

Second, Cardano postulated in his Chapter XXXVII the existence of imaginary numbers and complex numbers. He posed a question similar to the following: Find two numbers that sum to two, but for which the product is also two. The correct answer is \(1 + \sqrt{-1}\) and \(1 - \sqrt{-1}\). He admitted that this expression had no physical significance, but he nonetheless proceeded to explore the implications of such complex numbers.
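Modern languages carry complex arithmetic built in, so the answer to this puzzle, one plus the square root of −1 and one minus the square root of −1, can be verified directly. A minimal Python sketch, with variable names of our own choosing:

```python
u = complex(1, 1)    # 1 + sqrt(-1)
v = complex(1, -1)   # 1 - sqrt(-1)

total = u + v        # the imaginary parts cancel
product = u * v      # 1 - (sqrt(-1))**2 = 1 + 1

print(total, product)
```

Both the sum and the product come out as the real number two, just as the puzzle demands, even though neither number on its own has a physical magnitude.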

Cardano’s two mathematical taboos actually both seemed to defy Pythagorean common sense. Numbers were to represent physical quantities one could grasp, literally and physically. One cannot hold something of negative weight nor measure something of negative length. In 1637, René Descartes (March 1596–11 February 1650), the father of modern philosophy, for whom the Cartesian coordinate system was named, published his Discours de la méthode pour bien conduire sa raison, et chercher la vérité dans les sciences. In his Discourse on the Method of Rightly Conducting One’s Reason and of Seeking Truth in the Sciences, he solved the equation \(x^3 - ax + b^2 = 0\) when a and \(b^2\) are both positive. He noted that “For any equation one can imagine as many roots as its degree would suggest, but, in many cases no quantity exists which corresponds to what one imagines.”2 Roots that invoke the square root of a negative number were labeled imaginary. Hence, the square root of −1 is designated as “i.”

It took almost another 50 years for someone to propose a physical interpretation of negative numbers. In 1685, John Wallis (23 November 1616–28 October 1703), the English mathematician, the chief mapmaker and cryptographer for the British Parliament, published his book Algebra. In his treatise, he offered an interpretation of negative numbers as corresponding to the left-hand side of a line when the zero mark was somewhere between the left and right extremes.

Indeed, a mapmaker was in a unique position to observe negative numbers, especially the mapmaker to the British Parliament. Navigators measured the 360° of longitude based on the orientation of the Sun at specified times with reference to a time standard maintained at the Royal Observatory in Greenwich, London, England. This location was defined as the zero-degree meridian. Chronometers on ships then referenced the difference between solar time and the time coordinated with Greenwich Mean Time on their chronometers to determine their longitude. A position on a map to the right of Greenwich defined a positive increase in longitude and a new solar time relative to Greenwich Mean Time when the sun reaches a defined point in the sky, while movements to the left result in a decrease, or a negative change, in longitude and solar time relative to Greenwich Mean Time. This description of positions on a line based not on a distance but on a change in distance relative to the origin naturally suggests a physical significance to negative numbers. We now know Wallis’ insight as the real number line which spans both the positive and the negative directions.

In the same year that Wallis published his Algebra, which described the role of negative numbers, an 18-year-old Abraham de Moivre (26 May 1667–27 November 1754) had sought refuge in England from the religious persecution he experienced in France. Thirteen years after his arrival, he had come to know and befriend Isaac Newton (25 December 1642–20 March 1726/7). In his conversations with Newton, he revealed to Newton an interesting result based on Descartes’ imaginary number i. De Moivre noted that:

\[\left(\cos(x) + i\sin(x)\right)^n = \cos(nx) + i\sin(nx).\]
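De Moivre’s identity lends itself to a quick numerical check. The Python sketch below compares the two sides for one arbitrarily chosen angle and several integer powers; the function name `de_moivre_gap` and the angle 0.7 radians are our own choices for illustration.

```python
import math


def de_moivre_gap(x: float, n: int) -> float:
    """Distance between the two sides of de Moivre's identity."""
    lhs = complex(math.cos(x), math.sin(x)) ** n
    rhs = complex(math.cos(n * x), math.sin(n * x))
    return abs(lhs - rhs)


gaps = [de_moivre_gap(0.7, n) for n in range(1, 8)]
print(max(gaps))
```

The largest gap is at the level of floating-point rounding, consistent with the identity holding exactly for integer n.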

The young Gauss would have known of the utility of the real number line and of imaginary numbers. Like Wallis more than a century earlier with his negative numbers, Gauss was the first to offer a geometric intuition to complex numbers that created substance out of the imaginary numbers many still regarded as a mathematical oddity, despite their pleasing properties.

The 18-year-old Gauss apparently did not know that the Norwegian mathematician and mapmaker Caspar Wessel (8 June 1745–25 March 1818) had also offered such a geometric interpretation just a few years earlier, in 1799. Like Wallis, Wessel was also a mapmaker who studied directions, and hence vectors. It was a natural extension to consider the real-imaginary coordinate system rather than the conventional real-real coordinate system we all observe on two-dimensional maps and in the ubiquitous x-y graphs. But, his paper, Om directionens analytiske betegning, which he presented to the Royal Danish Academy of Sciences and Letters in 1797, went largely unnoticed and untranslated until 1897.

Buried in the Danish paper was the concept we use today to add vectors. Wessel stated in his On the Analytical Representation of Direction that:

Two straight lines are added if we unite them in such a way that the second line begins where the first one ends and then pass a straight line from the first to the last point of the united lines. This line is the sum of the united lines.

Wessel also applied his notion of vectors and vector addition to the complex plane. However, it was left to Gauss to bring the concept of the complex plane to light in the academic world, and to offer a vivid geometric interpretation that has found powerful application ever since. He did so as an 18-year-old youth who was trying to solve a problem that had perplexed mathematicians for more than two millennia.

Gauss had not set out to legitimize imaginary numbers, nor to define the complex plane. He merely discovered a practical intellectual framework that would allow him to solve a multi-millennial dilemma. Indeed, he would subsequently have discussions with others who recognized the value of the new analytic geometry he discovered in 1796. But while Gauss used his results for his own purposes, he did not publish them until 1831. It was then that he proposed the new term complex number. He described it thus:

If this subject has hitherto been considered from the wrong viewpoint and thus enveloped in mystery and surrounded by darkness, it is largely an unsuitable terminology which should be blamed. Had +1, −1 and \(\sqrt{-1}\), instead of being called positive, negative and imaginary (or worse still, impossible) unity, been given the names say, of direct, inverse and lateral unity, there would hardly have been any scope for such obscurity.3

Gauss’ discovery offered the first bridge between algebra and analytic geometry. By harkening back to the Pythagorean principle that geometric figures be drawn only with a compass and a straightedge, he also reinforced his fascination with the geometric mean, a property that would prove influential in his development of the least squares methodology.

To see his insight, consider the consequence of drawing vectors and geometric figures on a complex plane. In such a representation, with the algebra first proposed by Leonhard Euler a half century earlier, and the representation on the complex plane proposed by Caspar Wessel, the horizontal axis is the traditional real number line, and the vertical axis is in units of plus or minus the imaginary number i. In such a complex number plane, a given point is then described by a real part a and an imaginary part bi (Fig. 2.4).

The algebra of imaginary numbers had been fully explored by Euler and others, but the geometric interpretation was novel. The complex algebra had a number of nice properties. For instance, let’s begin with the simplest statement of exponential and trigonometric equivalency.

Recall de Moivre’s identity that was used by the great Leonhard Euler (1707–83) to subsequently determine, in 1748, that \(e^{ix} = \cos(x) + i * \sin(x)\) . The 1965 Nobel Prize-winning physicist Richard Phillips Feynman (11 May 1918–15 February 1988) labeled Euler’s formula “the most remarkable formula in mathematics.” Euler had been exploring the infinite series that represent the exponential and then the two trigonometric functions:

\[e^{x} = \sum_{n=0}^{\infty} \frac{x^{n}}{n!} = 1 + x + \frac{x^{2}}{2!} + \frac{x^{3}}{3!} + \cdots \quad \text{for all } x\]

Fig. 2.4 The complex plane

He observed the similarity between the infinite sum above and those of the sine and cosine functions:

\[\sin x = \sum_{n=0}^{\infty} \frac{\left(-1\right)^n}{\left(2n+1\right)!} x^{2n+1} = x - \frac{x^3}{3!} + \frac{x^5}{5!} - \cdots \quad \text{for all } x\]

\[\cos x = \sum_{n=0}^{\infty} \frac{\left(-1\right)^n}{(2n)!} x^{2n} = 1 - \frac{x^2}{2!} + \frac{x^4}{4!} - \dots \quad \text{for all } x\]

He noted that the infinite series terms for \(e^{ix}\) would equal the sum of the terms for \(\cos(x) + i\sin(x)\) . From this observation, he concluded Euler’s identity:

\[e^{ix} = \cos(x) + i\sin(x).\]
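Euler’s term-by-term comparison can be checked numerically. The following is a minimal Python sketch, not taken from the original text: the function name exp_series, the choice of 30 terms and the test angle are illustrative assumptions. It sums the power series for \(e^{ix}\) and compares the result with \(\cos(x) + i\sin(x)\).

```python
import math

def exp_series(z, terms=30):
    """Partial sum of the power series for e^z, using the first `terms` terms."""
    total = 0j
    term = 1 + 0j  # the n = 0 term, z**0 / 0!
    for n in range(terms):
        total += term
        term *= z / (n + 1)  # turns z**n / n! into z**(n+1) / (n+1)!
    return total

x = 1.2  # arbitrary test angle in radians (illustrative choice)
series_value = exp_series(1j * x)
trig_value = complex(math.cos(x), math.sin(x))

# The two sides should agree up to floating-point rounding.
print(abs(series_value - trig_value))
```

The same function evaluated at \(x = \pi\) returns a value very close to −1, which is the special case of the formula most often quoted.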

This result actually followed quite naturally from an assertion by a brilliant young mathematician named Roger Cotes in the early eighteenth century. Cotes (10 July 1682–5 June 1716) was a scientific prodigy and mathematician who worked closely with Newton in editing Newton’s Principia. The son of Robert Cotes, the rector of Burbage, and Grace Cotes (née Farmer), Roger studied at Trinity College, Cambridge, beginning in 1699 and was taken under Newton’s wing. Upon his graduation, he was given a Trinity Fellowship in 1707, and was appointed the Plumian Professor of Astronomy.

Cotes made two observations that were subsequently refined by Euler and by Gauss. First, in the area of mathematics, he noted in 1714 that:

\[ix = \ln(\cos x + i\sin(x)).\]

It is possible that Cotes failed to observe that \(\sin(x)\) and \(\cos(x)\) are periodic functions that cycle continuously between the values of -1 and 1 as x increases. When, in 1740, Euler instead expressed each side as an exponential, he was left with his familiar Euler’s formula, which he immediately recognized as necessarily periodic. Neither discoverer offered the now familiar interpretation offered by Wessel and Gauss, even though the interpretation is quite conventional today.

Interestingly, Cotes shared with Gauss a vocation in astronomy and, like Gauss, was interested in how observation errors tend to regress with increased observations, rather than multiply. His interest in predicting the movement of planets, given observational error, was the motivation for what would eventually result in the method of least squares.

With this history at hand, let us now explore Gauss’ insights. As asserted by Gauss, a complex number can be represented as the sum of a real component and an imaginary component representing the sum of two vectors on the complex plane. This vector is the sum of the movement in the real direction and imaginary direction. Thus they sum to a + bi. The parameters a and b then represent distances along the real horizontal axis and the imaginary vertical axis (Fig. 2.5).

In polar coordinates, the distance along the horizontal axis is just as we find for the real plane:

Fig. 2.5 The unit circle on the complex plane

\[x = r\cos(\Theta)\] \[y = r\sin(\Theta),\]

where r is equal to 1 in the case of the unit circle. In general, the real parameters (x, y) of a circle follow the identical equation for a circle as in the real plane:

\[r^2 = x^2 + y^2.\]

Notice, too, that this vector, described by a complex number z = x + iy, can be expressed as \(z = r(\cos(\Theta) + i\sin(\Theta))\) which equals \(re^{i\Theta}\) , from Euler’s Formula. We then see a simple property of the multiplication of complex numbers. Multiplying two complex numbers of polar length \(r_1\) and \(r_2\) and angles \(\Theta\) and \(\Psi\) results in a complex number \(r_1 r_2 e^{i(\Theta+\Psi)}\) . This is equivalent to the original ray scaled by a factor \(r_2\) and rotated by \(\Psi\) .

Another consequence of Gauss’ complex plane is that any position z multiplied by the imaginary number i results in a rotation of the vector z by one quadrant. For instance, consider a point \(z = a + bi\) . The product \(i \cdot z\) then yields \(ai + i^2 b = -b + ai\) , which is equivalent to the 90° rotation of the ray counterclockwise.
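Both properties of complex multiplication can be illustrated with a short Python sketch using the standard cmath module. The helper name polar_product and the sample lengths and angles are assumptions chosen only for illustration. The sketch multiplies two numbers given in polar form and reports the length and angle of the product, and then multiplies a sample point by i.

```python
import cmath
import math

def polar_product(r1, theta, r2, psi):
    """Multiply r1*e^{i*theta} by r2*e^{i*psi}; return (length, angle) of the product."""
    z = cmath.rect(r1, theta) * cmath.rect(r2, psi)
    return abs(z), cmath.phase(z)

# Sample values (illustrative): lengths 2.0 and 1.5, angles 0.3 and 0.4 radians.
length, angle = polar_product(2.0, 0.3, 1.5, 0.4)
print(length, angle)  # the lengths multiply (3.0) and the angles add (0.7), up to rounding

# Multiplying by i rotates a point by a quarter turn counterclockwise.
z = complex(3, 2)   # the point a + bi with a = 3, b = 2
rotated = 1j * z    # equals -b + ai
print(rotated)
```

Note that cmath.phase returns an angle in the interval from −π to π, so sums of angles that exceed π would be reported modulo a full turn.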

Within this unit circle on the complex plane are contained regular polygons of a very simple form. Note that the solutions of \(z^3=1\) are the apexes of a triangle (below), while \(z^4=1\) yields a square and \(z^6=1\) yields a hexagon. For instance, note that \(z^3-1=0\) can be factored into \((z-1)(z^2+z+1)=0\) , which yields the three roots \(z_1=1\) , \(z_2=(-1+i\sqrt{3})/2\) and \(z_3=\bar{z}_2=(-1-i\sqrt{3})/2\) . For the square, \(z^4=1\) , or \((z^2-1)(z^2+1)=0\) , which yields roots 1, i, −1, −i and the apexes below within the unit circle.

We now have the tools to understand Gauss’ insight. A regular unit k-polygon is simply a k-sided polygon with k unit radii to each apex \(P_j\) , and k sides, beginning with the ray defining the first apex (1,0). Below are examples of rays forming the apexes of a triangle, a square and a hexagon (Fig. 2.6):

Fig. 2.6 Regular polygons in the unit circle on the complex plane

Notice that, for the triangle, the first apex is given by real and imaginary coordinates (1,0), or \(z_0 = e^{i \cdot 0}\) , the second apex is \(z_1 = e^{i2\pi/3}\) and the third apex is \(z_2 = e^{i4\pi/3}\) . Each apex is rotated from the previous one by 120°, or 2π/3 radians. Since complex number multiplication by a unit ray simply represents a rotation, each apex is simply the square, or the cube, or the kth power of the second apex \(z_1\) . For an n-sided polygon with \(\Theta = 2\pi/n\) , n rotations return to the first apex, so that \(z^n = 1 = e^{in\Theta} = \cos(n\Theta) + i\sin(n\Theta)\) . Then, this description of an n-sided regular polygon developed by Gauss immediately yields De Moivre’s formula:

\[(\cos(\Theta) + i\sin(\Theta))^n = \cos(n\Theta) + i\sin(n\Theta).\]
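The relationship between regular polygons, roots of unity and De Moivre’s formula can be checked with a few lines of Python. This is a sketch under stated assumptions rather than anything drawn from Gauss’ own work: the function name roots_of_unity and the sample angle and power are hypothetical choices made for illustration.

```python
import cmath
import math

def roots_of_unity(n):
    """Apexes e^{i*2*pi*k/n}, k = 0..n-1, of a regular n-gon inscribed in the unit circle."""
    return [cmath.exp(2j * math.pi * k / n) for k in range(n)]

triangle = roots_of_unity(3)
square = roots_of_unity(4)

# Every apex z of the triangle satisfies z**3 = 1, up to rounding error.
max_residual = max(abs(z ** 3 - 1) for z in triangle)

# Each apex is a power of the second apex, triangle[1] = e^{i*2*pi/3}.
powers = [triangle[1] ** k for k in range(3)]

# De Moivre's formula at an arbitrary angle and power (illustrative values).
theta, n = 0.37, 5
lhs = complex(math.cos(theta), math.sin(theta)) ** n
rhs = complex(math.cos(n * theta), math.sin(n * theta))

print(max_residual, abs(lhs - rhs))  # both should be near machine precision
```

The list square should likewise reproduce the roots 1, i, −1 and −i, apart from rounding in the last digits.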

In fact, much of the mathematics required for Gauss to visualize regular polygons using a complex plane had already been discovered. His miraculous innovation merely required his brilliant 18-year-old mind to recast these observations geometrically in a powerful way that nobody had seen before. Scholars and students alike have appreciated Gauss’ elegant geometric interpretation ever since.

It may have been Gauss’ ignorance that allowed him to pursue and realize his profound discovery. He was not so indoctrinated into what is, and perhaps what could not be, that he failed to explore his most fruitful path. Instead, he became the first to show the confluence and creation of a few different branches of mathematics: Pythagorean geometry, complex numbers and the roots of equations described by \(z^n = 1\) . By embracing complex numbers, he also verified that an nth degree polynomial indeed has n roots. If we accept such complex roots, the nth root of unity problem and its relationship to polygons are immediately apparent.

Gauss’ next task was to demonstrate that some of these roots can be described using only whole numbers, their ratios and square roots, which characterizes the so-called constructible polygons that can be drawn with only a compass and an unmarked straightedge. The Greeks had known they could do so for polygons with 3, 4, 5, 6, 8, 10 and 12 sides. Each of these side counts is a power of two, a Fermat prime number, or the product of a power of two and distinct Fermat prime numbers. The next Fermat prime number in the sequence is 17, where the nth Fermat number, for n = 0, 1, 2 and so on, is given by the expression below and is called a Fermat prime when it is prime:

\[F_n = 2^{2^n} + 1\]
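The first few values of this expression are easy to tabulate. The Python sketch below is illustrative only: the helper names fermat_number and is_prime are assumptions, and the primality test is simple trial division, which is adequate only for the small values used here.

```python
def fermat_number(n):
    """Return F_n = 2**(2**n) + 1."""
    return 2 ** (2 ** n) + 1

def is_prime(m):
    """Primality by trial division; fine for the small inputs in this sketch."""
    if m < 2:
        return False
    d = 2
    while d * d <= m:
        if m % d == 0:
            return False
        d += 1
    return True

fermat = [fermat_number(n) for n in range(6)]
prime_flags = [is_prime(f) for f in fermat]

for n, (f, flag) in enumerate(zip(fermat, prime_flags)):
    print(n, f, "prime" if flag else "composite")
```

The values for n = 0 through 4 are 3, 5, 17, 257 and 65537, all of which are prime, while the value for n = 5, 4294967297, is divisible by 641 and so is not a Fermat prime.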

To prove that a 17-gon is constructible, Gauss had to show he could calculate \(\cos(2\pi/17)\) using only whole numbers and addition, subtraction, multiplication, division and square roots. He showed, correctly, that:

\[16\cos\frac{2\pi}{17} = -1 + \sqrt{17} + \sqrt{34 - 2\sqrt{17}} + 2\sqrt{17 + 3\sqrt{17} - \sqrt{34 - 2\sqrt{17}} - 2\sqrt{34 + 2\sqrt{17}}}.\]
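A numerical check of this expression is straightforward. The Python sketch below, with variable names chosen here purely for illustration, evaluates the nested square roots on the right-hand side and compares the result with \(16\cos(2\pi/17)\) computed directly. Agreement to floating-point precision is consistent with the identity but is, of course, not a proof of it.

```python
import math

s17 = math.sqrt(17)
inner = math.sqrt(34 - 2 * s17)   # the term sqrt(34 - 2*sqrt(17))
outer = math.sqrt(34 + 2 * s17)   # the term sqrt(34 + 2*sqrt(17))

# Right-hand side: built only from whole numbers, arithmetic and square roots.
radical_form = -1 + s17 + inner + 2 * math.sqrt(17 + 3 * s17 - inner - 2 * outer)

# Left-hand side: computed directly from the cosine.
direct_form = 16 * math.cos(2 * math.pi / 17)

print(radical_form, direct_form, abs(radical_form - direct_form))
```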

While it took Gauss a couple of years to write his dissertation into a treatise that proved the assertion he made in 1796, and another three years to rewrite it in the form of the published treatise Disquisitiones Arithmeticae, in Latin, in 1801,5 he nonetheless had signaled to the mathematical world his brilliance in solving a 2000-year-old problem.

In Gauss’ first year at the University of Göttingen, he was never fully secure in his personal finances. When he first entered the Collegium in Brunswick, his funding from the Duke was sufficient, but not permanent. He was overjoyed when the Duke agreed to fund his first year of study at Göttingen, but he remained concerned about whether the funding would continue. Having solved a 2000-year-old problem with an incredibly elegant and profound solution, the 18-year-old Gauss’ mathematical credentials were becoming established. He gained confidence he would be able to earn the continued financial support of Duke Ferdinand. Gauss then went on to complete his degree in mathematics at Göttingen, with the financial support of his patron, Duke Ferdinand.

Children of wealthy families never felt such financial pressure. They may have felt a need to perform intellectually as a matter of pride, but not of necessity. Gauss, though, felt a more pecuniary pressure to perform. He was notorious for his hard work, as an antidote to economic insecurity, which, when combined with his almost uncanny ability to see problems in unique and geometrical ways, resulted in insights such as his constructibility solution to the 17-gon. And, with each success came a marginally increased confidence in his future funding.

Fortunately, following Gauss’ first year at Göttingen, the Duke agreed to fund his work to the completion of his thesis. Lesser minds may have translated such financial security into reduced incentives to demonstrate their brilliance. Gauss, though, was academically emboldened. He followed up what he considered to be his greatest work with what others who followed may believe were even more substantial contributions.

For instance, Gauss standardized the use of the imaginary number i as a legitimate number that represents the geometric mean between +1 and −1, that is, \(i = \sqrt{(+1)(-1)}\) . And, in completing his doctoral dissertation at one of Germany’s greatest universities in 1799, at the age of 22, and in his Disquisitiones Arithmeticae, dedicated to his patron Duke Ferdinand, Gauss unified the contributions of the great mathematical minds of his era, including Pierre de Fermat (17 August 1601–12 January 1665), Leonhard Euler (15 April 1707–18 September 1783), Joseph-Louis Lagrange (25 January 1736–10 April 1813) and Adrien-Marie Legendre (18 September 1752–10 January 1833). He not only introduced the foundations of a new type of complex analysis and many of its first results, but also established the field of number theory. In doing so, he also made a number of assertions, and sometimes proofs, that continue to be validated today. But, just as only a handful of people could absorb the unconventional and complex mathematics and physics of Albert Einstein in 1905, few could absorb Gauss’ work in 1799.